Agent-Memory — The key to salient episodic memory for AI Agents

Unlocking Persistent Context: Solving AI Agent Amnesia with Advanced Episodic Memory Systems

Tired of your AI coding assistant forgetting crucial details after every session? A Rust-powered memory system can address the amnesia problem and give your AI the persistent context it needs.

Summary: A Rust-powered, append-only conversational memory system addresses the amnesia problem in AI coding assistants by giving agents salient episodic memory, so they can recall past conversations and decisions. It distinguishes between library memory (static documents) and episodic memory (dynamic conversation history) and uses a six-layer cognitive stack for efficient retrieval. Salience detection prioritizes important memories, while the append-only design retains raw events indefinitely. The system supports multi-agent memory sharing through several strategies, enhancing collaborative coding. It also supports index eviction, keeping the most recent and most salient memories in the index without blowing up your RAM budget.

This is a work in progress. A side quest that I have been working on.

How a Rust-powered, append-only conversational memory system solves the AI agent amnesia problem and gives AI coding assistants persistent context

Introduction: The Amnesia Problem

Your AI coding agent forgot everything again.

Not last week’s architecture decision. Not the constraint your team established in January about never exposing secrets in logs. Not the careful approach you worked out together for the database schema migration that took three sessions to get right. Gone. Every new terminal session, every new conversation window: a clean slate. The agent greets you like a stranger.

This is not a bug in your agent. It is a fundamental gap in how AI coding assistants work today. They have powerful reasoning, deep programming knowledge, and sophisticated code generation. What they lack is episodic memory: the ability to remember what the two of you have done together. Without persistent AI agent context, every session is a reset.

The cost compounds quietly. You rebuild context every session. You re-explain constraints the agent already helped you establish. You re-discover decisions that took an hour the first time. Multiply that by 250 working days a year and you understand why the amnesia problem is a real productivity tax, not just a minor annoyance.

We have all experienced this. We update AGENTS.md, add hooks, cuss, and complain, but sometimes the agent still forgets something.

To fix this properly, start with one distinction: agents need two fundamentally different types of memory.

The first is library memory: knowledge about documents, code files, specifications, and domain concepts. Systems like agent-brain and spec-driven development tools such as BSD, BMAD, and SuperPowers cover this. You index your codebase, feed it PDFs, and ask “what does our auth system do?” The agent retrieves a relevant chunk.

The second is episodic memory (conversational memory): knowledge about conversations themselves. Not what a document says, but what you and your agent discussed, decided, and built together. This is the memory you need when you ask “what approach did we settle on for the database schema last week?” or “what was that trick we used to fix the slow query in March?”

Library memory indexes static artifacts. Episodic memory captures a living transcript of collaborative work. Different data shapes, different access patterns, and different storage architectures require different systems.

Agent-memory is built for episodic memory. It captures every conversation event automatically through hooks, organizes those events into a time-hierarchical index called the Table of Contents (TOC), and retrieves relevant context through a six-layer cognitive search stack. It is not a document RAG system or a generic RAG alternative: it is a specialized journal for an agent’s working life, with an intelligent filing system attached.

Here is how the two systems compare, in one sentence: agent-brain gives an agent library memory; agent-memory gives an agent episodic memory. Both are necessary. Neither replaces the other.

🚨🚨 WARNING — WORK IN PROGRESS / NOT PRODUCTION READY 🚨🚨

Okay, this is more of a blog post than an article about a polished product. It’s a stream-of-consciousness look into a project I’ve been building called agent-memory.

This is a background project I’ve been iterating on for a while. It’s full of ideas, experiments, and directions, but it is not finished.

I’m actively looking for people who want to:

- Give feedback

- Break things

- Help test and shape the system

- Potentially collaborate on building it out

⚠️ This will require effort. There will be trial and error. Things will change.

The core idea: build a fast, runtime memory system for agents that can:

- Remember salient topics

- Track preferences, tasks, and episodes

- Retain important context over configurable time windows

- Dynamically adjust what stays “important” in memory

But let’s be clear:

🚨 This is NOT a “ready-to-use tool”

🚨 This is NOT stable

🚨 This is NOT something you should rely on in production

This is an in-progress system that I’m putting out early on purpose.

I’m looking for:

- Smart people interested in agent memory systems

- Builders who want to experiment

- People willing to test, break, and iterate

- Anyone excited about pushing this space forward

If you’re just looking for something polished and ready, this isn’t it. Not yet anyway.

If you want something production-ready, check out other projects like agent-brain. We are promoting the use of agent-brain and want people to try it out and use it. It is ready for consumption.

👉 This project is different. This is collaboration-stage, not consumption-stage.

I’m sharing it because I want help from people smarter than me — or just motivated enough to try things out and give honest feedback.

So yeah… this is a side quest. It’s evolving. It’s rough.

And that’s exactly why I’m putting it out there.

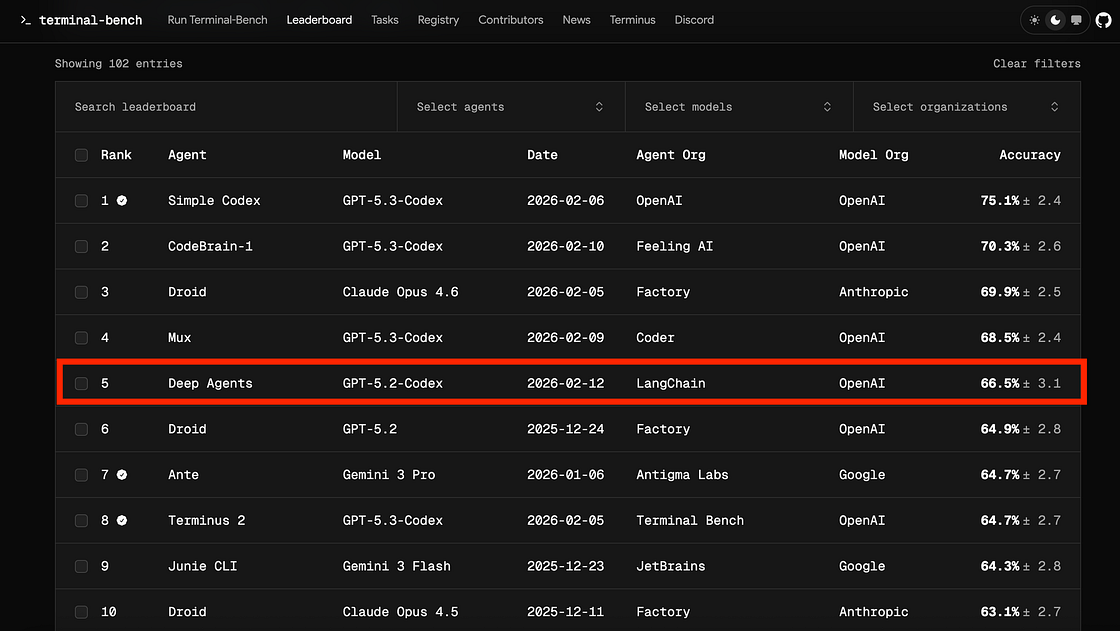

Here are some agent memory systems that you can use today:

- MemMachine

- Mem0

- OpenClaw (default memory)

- LangGraph / LangChain memory

- LlamaIndex memory

- AutoGPT memory

- CrewAI memory

I won’t compare agent-memory to these systems because it is not done, but I will say it endeavors to be a better agent memory system than some of them. Perhaps when it is further along, a comparison article will be in order.

What agent-memory Does (or will do)

The core promise of agent-memory, stated in its own documentation: “Agent can answer ‘what were we talking about last week?’ without scanning everything.”

That phrase “without scanning everything” is doing a lot of work. The naive approach to an AI agent memory system is brute-force: dump all conversation history into a vector store and similarity-search at query time. This works until your history grows past a few thousand conversations. Then recall quality degrades, latency spikes, and retrieval becomes expensive.

Agent-memory takes a different approach. It builds a hierarchical summary structure over your conversations so an agent can navigate using O(log N) lookups instead of O(N) scans. It classifies memories by type so high-importance information survives longer and ranks higher. It layers search modalities intelligently: keyword search, semantic search, and graph traversal all feed into a ranking layer that surfaces the most relevant results.

The system captures six types of memory:

Observation

- Salience Boost: 0.0

- Examples: General conversation content

- Notes: Default; subject to staleness decay

Preference

- Salience Boost: 0.20

- Examples: “I prefer tabs over spaces”; “avoid Redux”

- Notes: Decay-exempt; remembered indefinitely

Procedure

- Salience Boost: 0.20

- Examples: Step-by-step workflows; “first do X, then Y”

- Notes: Decay-exempt; structural knowledge

Constraint

- Salience Boost: 0.20

- Examples: “Must use TypeScript”; “never expose secrets”

- Notes: Decay-exempt; highest priority recall

Definition

- Salience Boost: 0.20

- Examples: “Our ‘segment’ means a 30-minute window”

- Notes: Decay-exempt; project vocabulary

Episode

- Salience Boost: varies

- Examples: Task records: start, actions, outcome, lessons

- Notes: Value-score-based retention (Phase 44)

We are just looking for important things to remember. Later you can recall these without friction. The agent starts to remember your preferences, experiences, workflows, etc.

Observation is the default. Everything that does not match a higher-signal pattern becomes an observation. Preferences, procedures, constraints, and definitions get salience boosts and escape the staleness decay that dims older memories over time. Episodes are task-level records that capture structured execution history.

This taxonomy matters in practice. If you and your agent decided six months ago that your team avoids a specific pattern, that constraint should still surface today. The salience boost and decay exemption are what keep it visible.

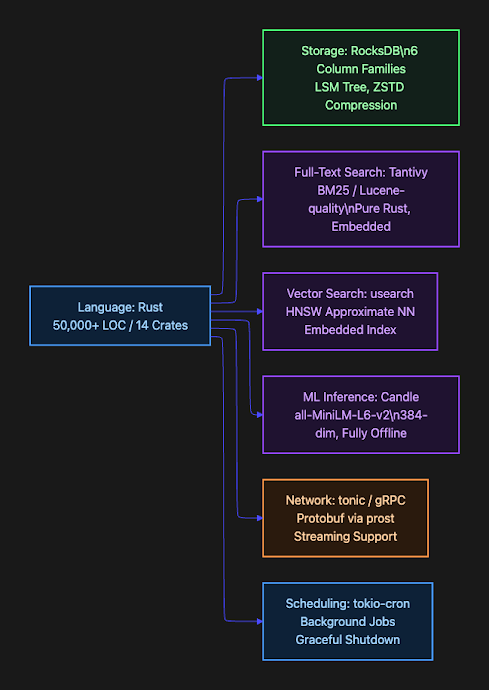

Tech Stack: Why Rust and RocksDB

The entire agent-memory system is written in Rust: approximately 50,000 lines across 14 crates in a Cargo workspace. This Rust AI agent framework reflects specific requirements that guided every dependency selection.

Rust was chosen for performance, memory safety, and the ability to run as a long-lived embedded daemon without a garbage collector pausing at inconvenient moments. An agent memory system needs to be invisible. If it adds latency or consumes memory unpredictably, developers stop using it. Rust’s zero-cost abstractions and deterministic memory model make it the right choice for a local background service.

RocksDB provides the persistent storage layer via the rust-rocksdb binding. It is an embedded key-value store that runs inside the daemon process: no separate database server to install, configure, or upgrade. RocksDB’s LSM tree structure is ideal for append-heavy workloads; writes are fast, and range scans over time-prefixed keys (the primary access pattern) are efficient. ZSTD compression keeps the storage footprint low.

RocksDB also benefits from the operating system’s page cache. With its default buffered I/O, reads of SST (Sorted String Table) files pass through the page cache, and RocksDB can additionally be configured to memory-map those files (the allow_mmap_reads option). Either way, frequently accessed data tends to stay in RAM without explicit caching logic in the application layer: the OS keeps hot data in memory and evicts cold data as needed.

This matters for Layer 2 (Agentic TOC Search). Even when falling back to index-free term matching, essentially grep-like scans over TOC summaries, the system remains fast because those summaries are likely already in the page cache. Scanning through thousands of TOC nodes for keyword matches then happens at memory speeds, not disk speeds.

The result: even the slowest fallback path in agent-memory is still acceptably fast. The system degrades gracefully under load or during index rebuilds, never becoming unusable.

Six column families partition the data logically within one RocksDB instance:

events

- Raw conversation events (immutable, ZSTD compressed)

toc_nodes

- Versioned TOC summaries (append-versioned, not mutated)

toc_latest

- Pointers to latest TOC node version

grips

- Excerpt provenance records

outbox

- Background job work queue (FIFO compaction)

checkpoints

- Per-job progress for crash recovery

Tantivy handles full-text search. It is a Lucene-quality BM25 search engine written entirely in Rust. The BM25 index covers TOC node summaries and grip excerpts. When an agent asks “find sessions where we discussed the authentication flow”, Tantivy teleports directly to relevant TOC nodes without scanning every event. Critically, the Tantivy index is disposable: it can be fully rebuilt from RocksDB at any time. RocksDB is the source of truth.

usearch provides approximate nearest-neighbor search using the HNSW algorithm. This is the vector search layer for semantic queries. Like the BM25 index, it is derived from and rebuildable from RocksDB.

Candle is Hugging Face’s ML framework written in Rust. agent-memory uses it to run the all-MiniLM-L6-v2 model locally, producing 384-dimensional embeddings for every conversation event and TOC summary. No external API calls. No API keys. No network dependency for embedding generation. The system works fully offline; embeddings are deterministic, and there are no embedding costs.

💡 Note: All embedding inference runs locally via Candle. You do not need an OpenAI API key or any network connection to use semantic search. The all-MiniLM-L6-v2 model runs on CPU with acceptable performance for background indexing.

tonic provides the gRPC server and client. All communication between clients (plugins, adapters) and the daemon uses protobuf-defined RPCs. This gives strong typing, binary efficiency, streaming support, and generated client stubs for multiple languages.

tokio-cron-scheduler handles the background job pipeline: TOC summarization rollups, BM25 index sync, vector index sync, topic graph refresh, and retention cleanup. CancellationToken enables graceful shutdown without leaving indexes in inconsistent states.

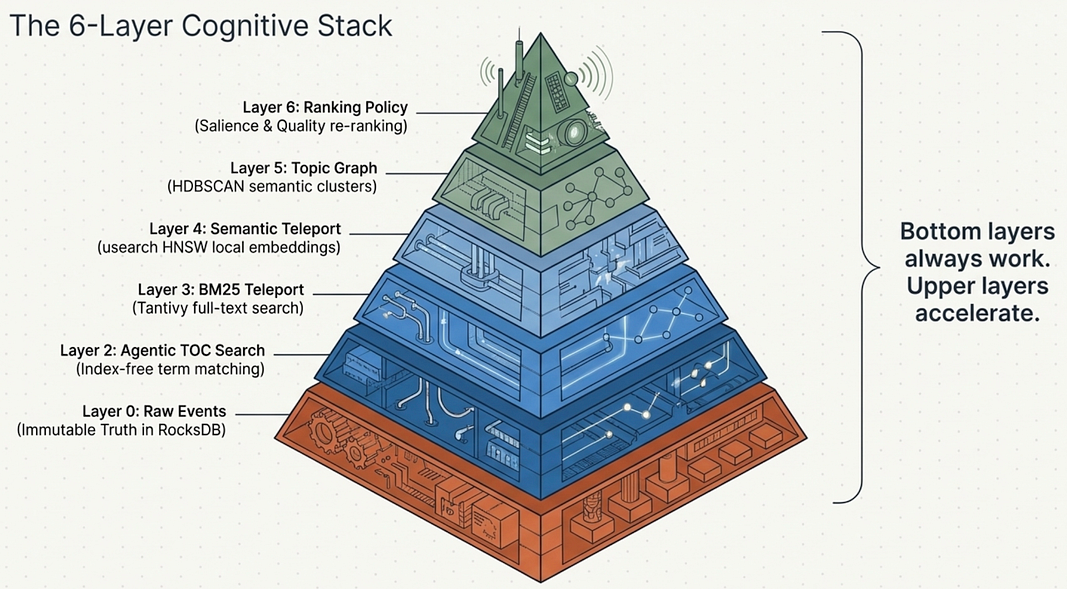

Architecture: The 6-Layer Cognitive Stack

The central architectural principle of agent-memory is this:

💡 Key Design: “Indexes are accelerators, not dependencies.” The TOC hierarchy is always available. Every other layer is optional and degrades gracefully. The system never fails completely just because a secondary index is missing or being rebuilt.

This principle shapes the entire retrieval stack. Six layers sit on top of the raw event store (Layer 0), each adding capability, and everything above Layer 1 is optional.

Six-layer pyramid diagram of AI cognitive memory architecture: from raw events at the base to ranking policy at the apex.

Think of these layers as a pyramid of progressively smarter search. The bottom layers always work. The upper layers accelerate and refine.

Layer 0: Raw Events is the immutable truth layer. Every conversation event is stored exactly once with a time-prefixed key: evt:{timestamp_ms}:{ulid}. These events are never modified, are ZSTD-compressed, and are range-scannable. Every other layer is built from this foundation.
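A minimal sketch of why a time-prefixed key makes range scans cheap. It assumes the timestamp is zero-padded so that byte order matches time order, and it uses a `BTreeMap` as a stand-in for the ordered RocksDB keyspace; `event_key` and `scan_range` are illustrative names, not the project's API.

```rust
use std::collections::BTreeMap;

/// Builds a key in the `evt:{timestamp_ms}:{ulid}` shape described above.
/// Assumption: the timestamp is zero-padded to 13 digits so that
/// lexicographic key order matches chronological order.
fn event_key(timestamp_ms: u64, ulid: &str) -> String {
    format!("evt:{:013}:{}", timestamp_ms, ulid)
}

/// Returns the payloads of all events with `start_ms <= timestamp < end_ms`.
/// The `BTreeMap` plays the role of the sorted key-value store.
fn scan_range<'a>(
    store: &'a BTreeMap<String, String>,
    start_ms: u64,
    end_ms: u64,
) -> Vec<&'a str> {
    if start_ms >= end_ms {
        return Vec::new(); // Empty or inverted window: nothing to scan.
    }
    let lo = format!("evt:{:013}:", start_ms);
    let hi = format!("evt:{:013}:", end_ms);
    store.range(lo..hi).map(|(_, v)| v.as_str()).collect()
}
```

Because the keys sort by time, a query such as "the last 10 hours" touches only the keys inside that window instead of every stored event.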

Layer 1: TOC Hierarchy converts raw events into navigable summaries. Background jobs periodically summarize conversations into a Year > Month > Week > Day > Segment tree. Each TOC node contains a title, bullet points summarizing key topics, keywords, and links to child nodes. An agent navigating this tree follows what the system calls Progressive Disclosure Architecture (PDA): start at Year, drill to Month, then Week, then Day, then Segment, reading summaries at each level and deciding whether to go deeper. This is O(log N) versus brute-force O(N) scanning.

Layer 2: Agentic TOC Search provides index-free search within the TOC structure. The SearchNode and SearchChildren RPCs traverse the TOC tree doing term matching against summaries. This is slower than indexed search, but it has zero external dependencies and always works, even if the BM25 and vector indexes are being rebuilt.

Layer 3: BM25 Teleport is where Tantivy comes in. Instead of navigating the TOC tree level by level, BM25 search teleports directly to the most relevant TOC nodes and grips based on keyword matching. A query like “authentication session timeout” goes directly to the relevant day or segment nodes.

Layer 4: Semantic Teleport adds embedding-based similarity. The usearch HNSW index finds TOC nodes and grips that are semantically related to the query, even when they use different keywords. The results of BM25 and semantic search are fused using reciprocal rank fusion (RRF) for hybrid retrieval.
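Reciprocal rank fusion itself is simple enough to sketch. The version below is the textbook formulation, not code from agent-memory: each result scores the sum of 1 / (k + rank) over the lists it appears in. The function name and the constant k = 60 (a commonly used default) are assumptions.

```rust
use std::collections::HashMap;

/// Fuses several ranked result lists with reciprocal rank fusion.
/// Each id scores sum(1 / (k + rank)) over the lists it appears in,
/// using 1-based ranks. `k` is a smoothing constant (60.0 is common).
fn reciprocal_rank_fusion(rankings: &[Vec<&str>], k: f64) -> Vec<(String, f64)> {
    let mut scores: HashMap<String, f64> = HashMap::new();
    for ranking in rankings {
        for (idx, doc_id) in ranking.iter().enumerate() {
            let rank = (idx + 1) as f64;
            *scores.entry(doc_id.to_string()).or_insert(0.0) += 1.0 / (k + rank);
        }
    }
    let mut fused: Vec<(String, f64)> = scores.into_iter().collect();
    // Highest fused score first; ties broken by id for a stable order.
    fused.sort_by(|a, b| {
        b.1.partial_cmp(&a.1)
            .unwrap()
            .then_with(|| a.0.cmp(&b.0))
    });
    fused
}
```

A node that ranks near the top of both the BM25 list and the semantic list beats a node that is first in only one of them, which is the behavior you want from hybrid retrieval.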

Layer 5: Topic Graph operates above individual conversations. HDBSCAN clusters all conversation embeddings into named topics. LLM calls label each topic and assign importance scores. A time-decay function dims older topics. This layer answers questions like “what themes have I been working on this month?” by routing through topic clusters rather than time-based navigation.

Layer 6: Ranking Policy is the final quality layer. It applies the salience formula, usage-based decay, and novelty gating to re-rank results. High-salience memories (constraints, definitions, procedures) rank higher. Frequently-recalled items get slight deprioritization to surface less-seen but relevant content. Very similar results get filtered for novelty.

The Retrieval Brainstem sits alongside this stack as the decision router. It classifies query intent (Explore, Answer, Locate, Time-boxed), detects which capability tiers are available, builds a search plan, executes fallback chains if a tier fails, and applies stop conditions to prevent unnecessary work. The brainstem is what makes this AI agent memory system behave intelligently rather than executing every layer for every query.

Key Concepts: TOC, Grips, Segments, Outbox

Understanding four core concepts unlocks the rest of the system.

Table of Contents (TOC) is the primary navigational data structure. It is a time-indexed hierarchy: Year at the root, then Month, then Week, then Day, then Segment at the leaves. Each node contains an LLM-generated summary with a title, bullet points covering key topics, and keywords. Because it is time-based, navigating it is always predictable: you know where to look for “last Tuesday” without any index.

The Progressive Disclosure Architecture (PDA) built on top of the TOC lets an agent navigate like a human scanning a table of contents. Start at Year. “Is this the right year?” Yes. Drill to Month. March. Drill to Week. The week of March 10. Drill to Day. “March 15th summary looks relevant.” Drill to Segment. Read the actual conversation context. At each level, the agent reads a compact summary and decides whether to continue drilling or pivot laterally. This is the human reading pattern applied to machine memory.
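The drill-down can be sketched as a recursive walk that only descends into nodes whose summary looks relevant. This is a simplified illustration: `TocNode`, its fields, and `drill_down` are hypothetical names, relevance is reduced to an exact keyword match, and it assumes child keywords roll up into their parents.

```rust
/// One node in a time-based TOC tree (Year > Month > Week > Day > Segment).
/// Field names are illustrative, not the project's actual schema.
struct TocNode {
    title: String,
    keywords: Vec<String>,
    children: Vec<TocNode>,
}

/// Small constructor helper to keep example trees readable.
fn node(title: &str, keywords: &[&str], children: Vec<TocNode>) -> TocNode {
    TocNode {
        title: title.to_string(),
        keywords: keywords.iter().map(|k| k.to_string()).collect(),
        children,
    }
}

/// Walks from `node` toward the leaves, descending only into nodes whose
/// keywords contain `term`. Returns the titles along each matching path.
fn drill_down(node: &TocNode, term: &str) -> Vec<Vec<String>> {
    if !node.keywords.iter().any(|k| k.as_str() == term) {
        return Vec::new(); // Summary does not look relevant: prune this branch.
    }
    let mut paths = Vec::new();
    for child in &node.children {
        for mut path in drill_down(child, term) {
            path.insert(0, node.title.clone());
            paths.push(path);
        }
    }
    if paths.is_empty() {
        // Leaf node, or no child matched: this node is the deepest hit.
        paths.push(vec![node.title.clone()]);
    }
    paths
}
```

Each pruned branch is a whole month or week of conversation that never has to be read, which is where the savings over a flat scan come from.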

Grips are provenance records. A grip is an excerpt from raw events anchored to a specific bullet point in a TOC summary. When a Day node says “Discussed: using HNSW for approximate nearest-neighbor search”, the grip is the actual message exchange that produced that bullet. Grips link the compressed summary layer to the immutable truth layer.

This matters for reliability. Without grips, an agent citing a TOC summary has no way to verify the claim. With grips, it can expand any bullet point back to the original conversation. Salience fields were added to grips in Phase 16, so grips now carry their own importance scores.

Segments are the leaf level of the TOC hierarchy. A segment groups conversation events by time (default: 30-minute gap between events ends a segment) or by token count (default: 4,000 tokens, with 500-token overlap into the next segment for context continuity). The token-based splitting ensures no segment is too large for LLM summarization. The overlap prevents hard context cuts at segment boundaries.
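Here is a rough sketch of the two splitting rules, using the defaults quoted above. The `Event` struct and `segment` function are hypothetical, token counts are assumed to be precomputed, and the 500-token overlap is deliberately left out to keep the example short.

```rust
/// A captured event reduced to the two fields segmentation needs.
#[derive(Debug, Clone, PartialEq)]
struct Event {
    timestamp_ms: u64,
    tokens: usize,
}

const MAX_GAP_MS: u64 = 30 * 60 * 1000; // a 30-minute gap ends a segment
const MAX_TOKENS: usize = 4_000; // token budget per segment

/// Splits time-ordered events into segments. A new segment starts when the
/// gap to the previous event exceeds `MAX_GAP_MS`, or when adding the event
/// would push the current segment past `MAX_TOKENS`.
fn segment(events: &[Event]) -> Vec<Vec<Event>> {
    let mut segments: Vec<Vec<Event>> = Vec::new();
    let mut current: Vec<Event> = Vec::new();
    let mut current_tokens = 0usize;
    for event in events {
        let gap_exceeded = current.last().map_or(false, |prev| {
            event.timestamp_ms.saturating_sub(prev.timestamp_ms) > MAX_GAP_MS
        });
        let over_budget = !current.is_empty() && current_tokens + event.tokens > MAX_TOKENS;
        if gap_exceeded || over_budget {
            segments.push(std::mem::take(&mut current));
            current_tokens = 0;
        }
        current_tokens += event.tokens;
        current.push(event.clone());
    }
    if !current.is_empty() {
        segments.push(current);
    }
    segments
}
```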

The Outbox Pattern handles crash recovery. When a conversation event arrives, it is written atomically to two places simultaneously: CF_EVENTS (the immutable record) and CF_OUTBOX (a work queue entry). Background jobs drain the outbox, processing events into TOC nodes, BM25 index entries, and vector index entries. A CF_CHECKPOINTS column family tracks processing progress per job type. If the daemon crashes mid-processing, the checkpoint tells it exactly where to resume. No events are lost. No events are double-processed.
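The interplay of outbox and checkpoint is easier to see in a toy model. The sketch below keeps everything in memory; `Store`, `ingest`, and `drain` are made-up names, and the real system would perform the two writes in `ingest` as one atomic RocksDB batch.

```rust
use std::collections::BTreeMap;

/// In-memory stand-in for the store: events, an outbox, and a checkpoint.
#[derive(Default)]
struct Store {
    events: BTreeMap<u64, String>, // sequence number -> raw event
    outbox: BTreeMap<u64, ()>,     // sequence numbers awaiting processing
    checkpoint: Option<u64>,       // last sequence number fully processed
    next_seq: u64,
}

impl Store {
    /// Records the event and its outbox entry together.
    fn ingest(&mut self, payload: &str) -> u64 {
        let seq = self.next_seq;
        self.next_seq += 1;
        self.events.insert(seq, payload.to_string());
        self.outbox.insert(seq, ());
        seq
    }

    /// Processes up to `limit` pending entries in order. Anything at or
    /// below the checkpoint is skipped, so a restart never repeats work.
    fn drain(&mut self, limit: usize, processed: &mut Vec<String>) {
        let pending: Vec<u64> = self.outbox.keys().copied().take(limit).collect();
        for seq in pending {
            if self.checkpoint.map_or(true, |done| seq > done) {
                processed.push(self.events[&seq].clone());
                self.checkpoint = Some(seq);
            }
            self.outbox.remove(&seq);
        }
    }
}
```

Calling `drain` with a small limit simulates a daemon that stops partway through; the next call picks up from the checkpoint and the raw events are never touched.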

Agentic Temporal Search Based on PDA

The search strategy built on the TOC hierarchy is fundamentally agentic, mirroring the Progressive Disclosure Architecture (PDA) that agent skills employ. The agent doesn’t blindly execute a fixed retrieval plan; it reads, decides, and adapts. Teleportation may drop the search at the right point in time, but even when it does not, the agent can always fall back on temporal navigation of the TOC.

Agentic Navigation Pattern: The agent starts at a high-level TOC node (Year or Month). It reads the summary. Based on what it sees, it makes a decision: “Is this relevant? Should I drill down to the next level (Week, then Day, then Segment)? Or should I move laterally to the next Month or Year?” This is the same pattern a human uses when scanning a book’s table of contents.

The agent can also dynamically adjust its search scope. If a query is time-sensitive (“What did we discuss in the last 10 hours?”), the agent can skip high-level nodes entirely and go straight to the most recent Day or Segment nodes. If the query is exploratory (“What were the main themes last month?”), it might stay at the Week or Month level and read summaries without drilling to raw events.

Hybrid Agentic + Grep Strategy: The agentic TOC search can be combined with targeted grep-like queries. For example, the agent might decide: “I need to scan the last three weeks for mentions of ‘authentication timeout’.” It navigates to the Week nodes for the past three weeks, then uses SearchNode or SearchChildren RPCs to perform term matching across those specific nodes. This is more efficient than a brute-force scan of all events, because the agent narrows the search space first using the TOC structure.

The Retrieval Brainstem orchestrates these decisions. It classifies the query intent (Explore, Answer, Locate, Time-boxed), detects which search tiers are available (TOC-only, BM25, semantic, topic graph), and builds a search plan. If BM25 is unavailable, the brainstem falls back to agentic TOC search. If the query is time-boxed (“last 10 hours”), it routes directly to recent Day or Segment nodes and skips higher levels entirely.
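The planning step might look something like the sketch below. The intent names come from the text, but the `Tier` enum, the preferred order per intent, and `build_plan` are assumptions made for illustration; the only behavior taken from the source is that time-boxed queries go straight to the TOC and that agentic TOC search is always available as the last fallback.

```rust
#[derive(Debug, Clone, Copy, PartialEq)]
enum Tier {
    TopicGraph,
    Semantic,
    Bm25,
    AgenticToc,
}

#[derive(Debug, Clone, Copy, PartialEq)]
enum Intent {
    Explore,
    Answer,
    Locate,
    TimeBoxed,
}

/// Preferred tier order per intent (an illustrative guess, not the
/// project's actual routing table). AgenticToc always comes last.
fn preferred_tiers(intent: Intent) -> Vec<Tier> {
    match intent {
        Intent::Explore => vec![Tier::TopicGraph, Tier::Semantic, Tier::Bm25, Tier::AgenticToc],
        Intent::Answer => vec![Tier::Semantic, Tier::Bm25, Tier::AgenticToc],
        Intent::Locate => vec![Tier::Bm25, Tier::Semantic, Tier::AgenticToc],
        Intent::TimeBoxed => vec![Tier::AgenticToc],
    }
}

/// Builds the fallback chain: preferred tiers that are currently available,
/// plus AgenticToc, which needs no index and therefore always works.
fn build_plan(intent: Intent, available: &[Tier]) -> Vec<Tier> {
    preferred_tiers(intent)
        .into_iter()
        .filter(|tier| *tier == Tier::AgenticToc || available.contains(tier))
        .collect()
}
```

If the BM25 index is mid-rebuild, it simply drops out of the plan and the query still completes through the remaining tiers.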

This agentic approach is what makes the system feel intelligent. The agent isn’t just retrieving; it’s navigating, deciding, and adapting its strategy based on what it finds.

💡 Pro Tip: The outbox and checkpoint pattern means you can safely kill the daemon at any time. It will resume exactly where it left off when restarted. You do not need to worry about corrupting the memory system by shutting it down abruptly.

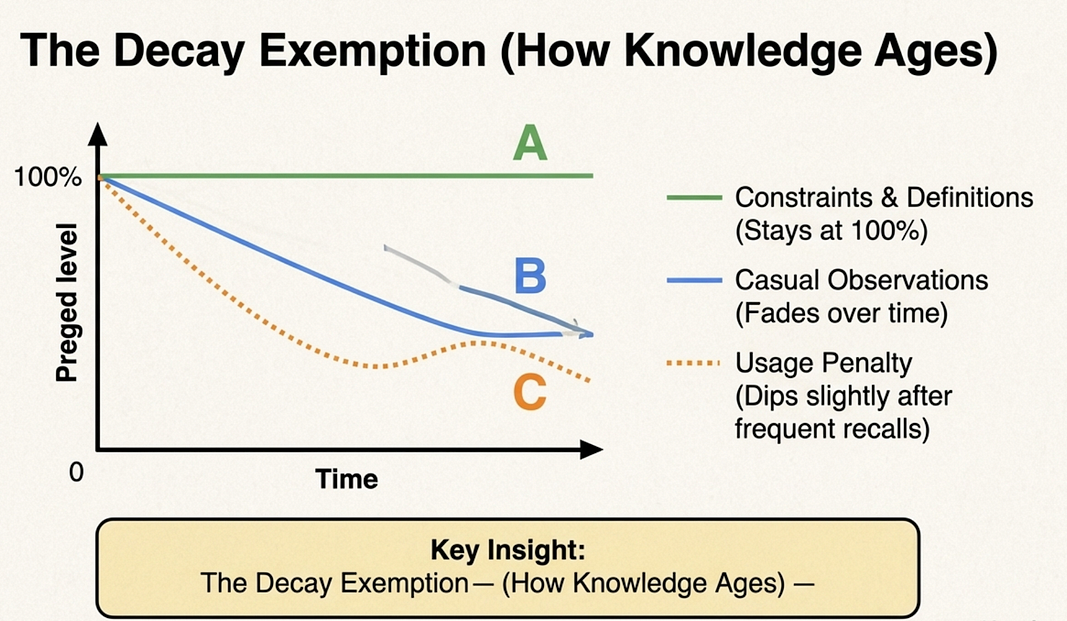

Salience Detection: Teaching Agents What Matters

Not all memories are equally important. The conversation where your team decided to never use global state is more important to recall than the conversation where you asked the agent to explain a function you already understood. An AI agent memory system that treats both equally will bury critical constraints under mundane observations.

Agent-memory solves this with salience detection: a scoring system that classifies importance at write time, stores it immutably on TOC nodes and grips, and incorporates it into retrieval-time ranking.

Why compute salience at write time rather than at query time? Because the system uses an append-only model. Immutable events produce immutable salience scores. There is no reindexing as the corpus grows and no per-query computational overhead.

The write-time formula combines three signals:

salience = length_density + kind_boost + pinned_boost

length_density = min(text.len() / 500, 1.0) * 0.45

kind_boost = 0.20 if MemoryKind is high-signal else 0.0

pinned_boost = 0.20 if is_pinned else 0.0

length_density captures the intuition that denser, longer content tends to be more substantive. A single-line exchange (“yes, sounds good”) gets a low density score. A multi-paragraph technical discussion gets a high score. The value is capped at 1.0 and scaled by 0.45, so density alone contributes at most 0.45 to the final salience score.

kind_boost adds 0.20 for high-signal memory kinds. The MemoryKind classifier inspects conversation content for signal patterns and assigns a category:

Constraint

- Boost: 0.20

- Signal Patterns: “must”, “should”, “need to”, “required”

- Staleness Decay: Exempt

Definition

- Boost: 0.20

- Signal Patterns: “is defined as”, “means”, “we call”

- Staleness Decay: Exempt

Procedure

- Boost: 0.20

- Signal Patterns: “step”, “first”, “then”, “follow”

- Staleness Decay: Exempt

Preference

- Boost: 0.20

- Signal Patterns: “prefer”, “like”, “avoid”, “don’t use”

- Staleness Decay: Exempt

Observation

- Boost: 0.00

- Signal Patterns: everything else (default)

- Staleness Decay: Subject to decay

When the classifier sees “we must always validate input before calling the external API”, it recognizes “must” as a constraint signal and assigns Constraint kind. That memory gets a 0.20 boost and becomes exempt from staleness decay. Six months later, it still ranks at full strength.
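A keyword classifier along these lines could be sketched as follows. The signal patterns are the ones listed above; the precedence order (Constraint first, Observation last), the whole-word matching, and the `classify` function itself are assumptions, not the project's implementation.

```rust
#[derive(Debug, Clone, Copy, PartialEq)]
enum MemoryKind {
    Constraint,
    Definition,
    Procedure,
    Preference,
    Observation,
}

/// True if any pattern occurs in `padded` as a whole word or phrase.
fn has(padded: &str, patterns: &[&str]) -> bool {
    patterns
        .iter()
        .any(|p| padded.contains(&format!(" {} ", p)))
}

/// Assigns a kind from signal patterns, checked in a fixed order.
fn classify(text: &str) -> MemoryKind {
    // Lowercase, turn punctuation into spaces, and pad with spaces so that
    // patterns match whole words only ("then" must not hit "authentication").
    let cleaned: String = text
        .to_lowercase()
        .chars()
        .map(|c| match c {
            '’' => '\'',
            c if c.is_alphanumeric() || c == '\'' => c,
            _ => ' ',
        })
        .collect();
    let words: Vec<&str> = cleaned.split_whitespace().collect();
    let padded = format!(" {} ", words.join(" "));

    if has(&padded, &["must", "should", "need to", "required"]) {
        MemoryKind::Constraint
    } else if has(&padded, &["is defined as", "means", "we call"]) {
        MemoryKind::Definition
    } else if has(&padded, &["step", "first", "then", "follow"]) {
        MemoryKind::Procedure
    } else if has(&padded, &["prefer", "like", "avoid", "don't use"]) {
        MemoryKind::Preference
    } else {
        MemoryKind::Observation
    }
}
```

Plain substring matching would be a trap here: "authentication" contains "then", so the sketch matches on word boundaries instead.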

pinned_boost adds another 0.20 when content has been explicitly pinned by the user. Pinning is a manual override for the classifier, useful when the content pattern is not obvious but the memory is clearly important.

A constraint that is long, dense, and pinned can reach a salience score of 0.45 + 0.20 + 0.20 = 0.85. A brief casual observation sits near zero.
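The write-time formula translates almost line for line into code. The function name and signature below are illustrative; note that `text.len()` counts bytes in Rust, which is assumed to be close enough to "length" for this purpose.

```rust
/// Write-time salience: length density plus kind and pin boosts.
/// `high_signal_kind` is true for Constraint, Definition, Procedure,
/// and Preference; false for Observation.
fn write_time_salience(text: &str, high_signal_kind: bool, is_pinned: bool) -> f64 {
    // Density saturates at 500 bytes and contributes at most 0.45.
    let length_density = (text.len() as f64 / 500.0).min(1.0) * 0.45;
    let kind_boost = if high_signal_kind { 0.20 } else { 0.0 };
    let pinned_boost = if is_pinned { 0.20 } else { 0.0 };
    length_density + kind_boost + pinned_boost
}
```

A 16-byte reply like "yes, sounds good" scores about 0.014 as a plain observation, while any text of 500 bytes or more that is both high-signal and pinned reaches the 0.85 ceiling.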

At retrieval time, the ranking formula combines similarity with salience and a usage correction:

salience_factor = 0.55 + (0.45 * salience_score)

usage_penalty = 1.0 / (1.0 + decay_factor * access_count)

combined_score = similarity * salience_factor * usage_penalty

salience_factor scales linearly from 0.55 (zero salience) to 1.00 (a salience of 1.0). Because the write-time formula above tops out at 0.85, the practical ceiling is about 0.93. Even a zero-salience observation can still rank well if it is highly similar to the query. High-salience memories get a multiplicative boost on top of their similarity score.

usage_penalty prevents high-value memories from dominating every query once they have been recalled many times. As access_count grows, the multiplier shrinks, deprioritizing frequently recalled items. This surfaces less-recalled but relevant content the agent may not have seen recently.
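The retrieval-time formula can be sketched the same way. The source does not give a value for decay_factor, so it is a parameter here, and the 0.1 used in the usage note is purely an illustrative assumption.

```rust
/// Retrieval-time score: similarity scaled by salience and usage.
/// `decay_factor` controls how quickly repeated recalls are penalized.
fn combined_score(similarity: f64, salience: f64, access_count: u32, decay_factor: f64) -> f64 {
    let salience_factor = 0.55 + 0.45 * salience;
    let usage_penalty = 1.0 / (1.0 + decay_factor * access_count as f64);
    similarity * salience_factor * usage_penalty
}
```

With a similarity of 0.8 and an assumed decay_factor of 0.1, a zero-salience memory scores 0.44, a never-recalled memory at salience 0.85 scores 0.746, and the same memory after ten recalls drops to 0.373.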

Decay exemption

The decay exemption for high-salience kinds is the most important rule in the system. Observations gradually dim as they age; staleness decay reduces their effective similarity weight over time. Constraints, definitions, procedures, and preferences are exempt. A constraint you set 18 months ago still ranks at full strength. An observation from 18 months ago is significantly dimmed.

💡 Key Design: The decay exemption encodes an important assumption: “your project’s constraints don’t expire; your casual observations do.” This is the memory system making an opinionated choice about what kind of knowledge ages well and what does not.

Memory Eviction: Retention Without Deletion

The append-only design means agent-memory never deletes raw events under normal operation. Events written to CF_EVENTS stay there. This simplifies crash recovery, provides an audit trail, and means you always have access to the original conversation transcript.

Retention policies operate on the derived indexes, not on raw data. When a retention policy runs, it prunes entries from the BM25 index and the vector index. The raw event survives. You lose indexed searchability for old content, not the content itself: it can still be reached through agentic TOC search, just not through BM25 or semantic teleportation.

💡 Note: Even after index pruning, raw events in CF_EVENTS remain intact. You can rebuild indexes from scratch at any time using memory-daemon admin rebuild-index. Old memories become unsearchable before they become unavailable.

The retention matrix is tiered by TOC level. Granular data (segments, daily grips) ages out faster. High-level summaries (week, month, year nodes) are retained much longer or indefinitely. This mirrors how human memory works: you forget the exact words of a conversation from last year, but you remember the gist.

Vector Index Retention

- Segment: 30 days

- Grip: 30 days

- Day node: 365 days

- Week node: 5 years

- Month node: Never pruned

- Year node: Never pruned

BM25 Index Retention

- Segment: 30 days

- Day node: 180 days

- Week node: 5 years

- Month node: Never pruned

- Year node: Never pruned
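As a sketch, the vector index retention list above maps naturally onto a lookup function. The enum and function names are hypothetical, and five years is approximated as 5 × 365 days.

```rust
#[derive(Debug, Clone, Copy, PartialEq)]
enum IndexedItem {
    Segment,
    Grip,
    Day,
    Week,
    Month,
    Year,
}

/// Vector-index retention in days; `None` means never pruned.
fn vector_retention_days(item: IndexedItem) -> Option<u32> {
    match item {
        IndexedItem::Segment | IndexedItem::Grip => Some(30),
        IndexedItem::Day => Some(365),
        IndexedItem::Week => Some(5 * 365), // five years, ignoring leap days
        IndexedItem::Month | IndexedItem::Year => None,
    }
}

/// True if an index entry of this kind and age is past its retention window.
fn should_prune(item: IndexedItem, age_days: u32) -> bool {
    vector_retention_days(item).map_or(false, |limit| age_days > limit)
}
```

Only the index entry is affected by this decision; the raw event behind it stays where it is.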

Automated cleanup runs via memory-daemon admin cleanup. The --dry-run flag shows what would be pruned without actually pruning it.

💡 Pro Tip: Always run memory-daemon admin cleanup --dry-run before your first live cleanup. The output shows exactly which index entries would be removed and at which TOC levels, so you can tune retention configuration before committing.

Retention is configurable per project: forever (default for most levels), 90 days, 30 days, or custom durations. Archive strategies include compress-in-place, export to file, or hard delete.

Topic graph pruning uses exponential time decay with a 30-day half-life. A topic that was active three months ago has decayed to 12.5% of its original importance. Topics that fall below the importance threshold during the weekly compaction job are pruned from the graph. This keeps the topic graph focused on current work rather than accumulating every topic forever.
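The 12.5% figure follows directly from the half-life: three months is three half-lives, and 0.5³ = 0.125. A small sketch of the decay function, with an illustrative name and signature:

```rust
/// Exponential time decay with a 30-day half-life:
/// importance halves for every 30 days of age.
fn decayed_importance(initial: f64, age_days: f64) -> f64 {
    const HALF_LIFE_DAYS: f64 = 30.0;
    initial * 0.5_f64.powf(age_days / HALF_LIFE_DAYS)
}
```

A compaction job would compare this value against the importance threshold and drop topics that fall below it.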

Episodic memory pruning (Phase 44) applies a value-score threshold to Episode-kind memories. Episodes below the threshold are pruned from long-term storage during compaction. The value score combines the episode’s salience, outcome quality, and recency.

GDPR mode enables full data removal: no tombstones, complete deletion from all storage layers. Audit logging tracks every deletion event for compliance reporting.

Multi-Agent Memory Sharing

Most developers use more than one AI coding agent: Claude Code for complex architecture work, Cursor for quick edits, Copilot for inline completions. Retaining conversational context across multiple agents is a real challenge. agent-memory supports three strategies for it, and you choose based on how much cross-agent sharing you want.

Strategy 1: Separate Stores (default)

Each agent gets its own physical RocksDB directory:

~/.memory-store/
    claude-code/
    cursor-ai/
    vscode-copilot/
    gemini-cli/
    codex-cli/

Physical isolation. One agent’s memory cannot affect another’s. Easy to delete: remove the directory to wipe one agent’s history cleanly. Project identification uses git repository root by default, falling back to a CWD hash if not in a git repo. Override with MEMORY_PROJECT_ID. We can store conversations by agent or project.
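The project identification order described above (explicit override, then git root, then a hash of the working directory) can be sketched like this. The function name, the ID formats, and the use of `DefaultHasher` are assumptions; a real implementation would want a hash that is stable across Rust versions.

```rust
use std::collections::hash_map::DefaultHasher;
use std::hash::{Hash, Hasher};
use std::path::Path;

/// Resolves a project ID: explicit override first, then the git
/// repository root, then a hash of the current working directory.
fn resolve_project_id(
    env_override: Option<&str>, // value of MEMORY_PROJECT_ID, if set
    git_root: Option<&Path>,    // repository root, if inside a git repo
    cwd: &Path,
) -> String {
    if let Some(id) = env_override.filter(|s| !s.is_empty()) {
        return id.to_string();
    }
    if let Some(root) = git_root {
        return root.display().to_string();
    }
    // Fallback: hash the working directory into a short hex ID.
    let mut hasher = DefaultHasher::new();
    cwd.hash(&mut hasher);
    format!("cwd-{:016x}", hasher.finish())
}
```

A caller would typically pass `std::env::var("MEMORY_PROJECT_ID").ok().as_deref()` as the first argument and discover the git root separately.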

Strategy 2: Unified Store with Agent Namespacing

One shared RocksDB with an agent_id field on every event. Cross-agent queries become possible: you can ask "what did any agent discuss about the auth system this month?" This is useful when you want a single view of all agent activity on a project.

Strategy 3: Team Mode

Multiple users share a network-accessible daemon. Conversation memory from one developer’s Claude Code session can be recalled by another developer’s session. This enables cross-user knowledge discovery: “what has the team been working on this sprint?” without manually writing status updates.

Separate Stores

- Isolation: Full physical

- Cross-Agent Queries: No

- Multi-User: No

- Use When: Default; clean separation per agent

Unified + Namespacing

- Isolation: Logical (agent_id)

- Cross-Agent Queries: Yes

- Multi-User: No

- Use When: One developer, multiple agents

Team Mode

- Isolation: None

- Cross-Agent Queries: Yes

- Multi-User: Yes

- Use When: Shared project context across developers

v2.7 shipped a Rust-native installer (the memory-installer crate) that generates correct configuration for five runtime targets in one pass: Claude Code, OpenCode, Gemini CLI, Copilot CLI, and Codex CLI. Previously, configuring each runtime required manual adapter setup. The installer handles all five automatically.

To support sharing from a developer’s machine, we may eventually use Kafka and/or NATS streaming, at which point sharing becomes a matter of publishing and subscribing to event streams. This is not in the current plans, but the event-stream architecture was designed with it in mind.

Integration: Claude Code Memory Plugin and Adapters

Agent-memory integrates with AI coding agents through a plugin and adapter layer. The daemon runs locally; plugins connect agents to it.

memory-setup-plugin is the Claude Code memory plugin for installation and configuration. It provides three commands: /memory-setup (first-run wizard), /memory-status (health check), and /memory-config (configuration management). It includes a setup-troubleshooter agent for diagnosing common problems, and two wizard skills: memo-retention-wizard for configuring retention policies and memo-multi-agent-wizard for setting up multi-agent memory sharing.

memory-query-plugin is the Claude Code plugin for active memory queries. It provides four skills:

- memory-query: Tier-aware retrieval with automatic fallback chains. Asks the Retrieval Brainstem to find relevant memories for the current session context.

- memory-search: Explicit search with topic or keyword filtering and period-based filtering ("search last month").

- memory-agents: Configuration wizard for multi-agent sharing scenarios.

- topic-graph: Semantic topic exploration; shows what themes have been active recently and lets you browse by topic rather than by time.

These skills correspond to the /memory-query, /memory-search, and /topic-graph commands in Claude Code.

Adapters for other runtimes follow the same pattern: hooks capture events and forward them to the daemon as IngestEvent gRPC calls. The adapter intercepts conversation events from the host agent's hook system and translates them into the agent-memory protobuf format.

- memory-gemini-adapter: Gemini CLI integration

- memory-copilot-adapter: GitHub Copilot CLI integration

- memory-opencode-plugin: OpenCode integration

The gRPC API is the universal contract. Any agent runtime can integrate by implementing the hook-to-IngestEvent translation.
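The hook-to-IngestEvent translation can be pictured with a sketch like the one below. Everything here is hypothetical: the hook names, the `HookPayload` fields, and the plain-Rust `IngestEvent` struct stand in for whatever the real hook payloads and protobuf message contain, and the actual gRPC call is omitted.

```rust
/// Hypothetical payload captured from a host agent's hook system.
struct HookPayload {
    session_id: String,
    hook_name: String, // assumed names: "user_prompt", "assistant_response", "tool_result"
    content: String,
}

#[derive(Debug, PartialEq)]
enum Role {
    User,
    Assistant,
    Tool,
}

/// Hypothetical stand-in for the protobuf IngestEvent message.
#[derive(Debug, PartialEq)]
struct IngestEvent {
    agent_id: String,
    session_id: String,
    role: Role,
    text: String,
    timestamp_ms: u64,
}

/// Translate one hook payload into an event, or `None` if there is nothing to ingest.
fn to_ingest_event(agent_id: &str, hook: &HookPayload, now_ms: u64) -> Option<IngestEvent> {
    let role = match hook.hook_name.as_str() {
        "user_prompt" => Role::User,
        "assistant_response" => Role::Assistant,
        "tool_result" => Role::Tool,
        _ => return None, // unknown hooks are skipped rather than guessed at
    };
    let text = hook.content.trim();
    if text.is_empty() {
        return None; // empty payloads carry no conversational state
    }
    Some(IngestEvent {
        agent_id: agent_id.to_string(),
        session_id: hook.session_id.clone(),
        role,
        text: text.to_string(),
        timestamp_ms: now_ms,
    })
}
```

An adapter built this way stays thin: it maps the host's vocabulary onto the shared event shape and leaves storage, indexing, and retrieval to the daemon.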

Claude Code is supported by default. Codex does not currently have a callback mechanism, so it can read from agent-memory but cannot yet contribute conversational state. Cursor support should be easy to add in the future.

Also, we added LangChain DeepAgent to the roadmap.

Design Decisions: The ADRs as Design Stories

Agent-memory’s architecture is documented through Architectural Decision Records (ADRs). These are not dry technical documents. Each ADR captures a real problem, the options considered, and the reasoning that drove the choice. Reading them reveals the intellectual history of the system.

ADR-001: Append-Only Storage

The team faced a fundamental choice: allow event mutation (simpler for some operations) or commit to immutability (simpler for everything else). They chose immutability.

The payoff is substantial. Crash recovery becomes simple: replay the outbox from the last checkpoint and resume. There is a natural audit trail. Caching is safe because immutable data never invalidates. There are no tombstone records cluttering queries. The one difficult trade-off is privacy: GDPR requests require a full database wipe rather than targeted record deletion, unless GDPR mode is explicitly enabled. The team accepted that trade-off because the operational simplicity of immutability was worth it for the primary use case.
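The "replay from the last checkpoint" recovery path is easy to see in miniature. The sketch below uses an in-memory `Vec` in place of RocksDB, and the `EventLog` type and its methods are hypothetical names chosen for the example, not the project's API.

```rust
/// Append-only log plus a checkpoint recording how far downstream
/// processing (indexing, summarizing) has progressed.
#[derive(Default)]
struct EventLog {
    events: Vec<String>, // entries are never mutated or removed once appended
    checkpoint: usize,   // number of events already processed
}

impl EventLog {
    /// Append an event and return its position in the log.
    fn append(&mut self, event: &str) -> usize {
        self.events.push(event.to_string());
        self.events.len() - 1
    }

    /// Replay every event after the checkpoint through `apply`, advancing
    /// the checkpoint after each one. Returns how many events were replayed.
    fn replay<F: FnMut(usize, &str)>(&mut self, mut apply: F) -> usize {
        let start = self.checkpoint;
        for (i, event) in self.events.iter().enumerate().skip(start) {
            apply(i, event);
            self.checkpoint = i + 1;
        }
        self.checkpoint - start
    }
}
```

Because events are immutable, replaying is safe to repeat: after a crash the worst case is reprocessing events from the last persisted checkpoint, never reconciling edits.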

ADR-002: TOC-Based Navigation Over Pure Vector Search

This is the most architecturally distinctive decision in the system. Many teams building an AI agent memory system default to “put everything in a vector store and semantic-search it.” The agent-memory team rejected that as the foundation.

Their reasoning: vectors are “teleport accelerators” that skip levels in the TOC hierarchy, not the foundation itself. The TOC ensures the system always works, even when the vector index is being rebuilt or has never been built. Time is a universal organizing principle for conversations: “last Tuesday” always has a meaningful location in the TOC, regardless of what words were used in those conversations. A pure vector approach loses this navigational guarantee.
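A toy version of time-based navigation shows why it needs no vector index at all. In this sketch the TOC is reduced to a year/month/day key over summary bullets; the `Toc` type, its methods, and the three-level key are simplifying assumptions and not the project's actual hierarchy or storage layout.

```rust
use std::collections::BTreeMap;

/// (year, month, day). Tuple ordering is chronological ordering.
type DayKey = (u16, u8, u8);

#[derive(Default)]
struct Toc {
    days: BTreeMap<DayKey, Vec<String>>, // day -> summary bullets
}

impl Toc {
    /// Record a summary bullet under the given day.
    fn add(&mut self, day: DayKey, bullet: &str) {
        self.days.entry(day).or_default().push(bullet.to_string());
    }

    /// Drill into one month with an ordered range scan over the keys.
    fn month(&self, year: u16, month: u8) -> Vec<(&DayKey, &Vec<String>)> {
        self.days
            .range((year, month, 0)..=(year, month, 31))
            .collect()
    }

    /// Look up a single day; an empty slice means nothing was recorded.
    fn day(&self, day: DayKey) -> &[String] {
        self.days.get(&day).map(Vec::as_slice).unwrap_or_default()
    }
}
```

"Last Tuesday" resolves to a key regardless of the words used that day. A vector hit would simply jump straight to one of these keys instead of walking down to it.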

ADR-003: gRPC Only

No HTTP/REST API. The choice was deliberate: strong typing via protobuf definitions eliminates a class of serialization bugs. Binary efficiency over JSON matters for a high-throughput ingestion path. Built-in streaming handles large result sets without custom pagination logic. Generated client stubs in multiple languages keep the integration surface consistent. The trade-off is that gRPC clients are less ubiquitous than HTTP clients; you cannot use curl to query the daemon. The team decided that developer convenience with direct curl calls was less important than the long-term reliability benefits of typed interfaces.

ADR-004: RocksDB as the Single Storage Backend

An embedded key-value store eliminates the database server deployment problem. No PostgreSQL instance to manage, no connection pool to configure, no separate backup process. RocksDB runs inside the daemon process. The LSM tree structure is well-matched to the append-heavy workload. Six column families within one RocksDB instance provide logical separation between data types, including events, TOC nodes, grips, outbox, and checkpoints. The system deploys as a single binary with no external dependencies.

ADR-005: Grips for Provenance

Every TOC summary bullet is backed by a grip that links to source events. This decision addresses a trust problem: agents citing memory summaries should be able to verify those claims. Without grips, an agent might confidently state a constraint that was actually paraphrased incorrectly in the summary. With grips, it can expand any bullet back to the exact raw conversation. This matters most for Constraint and Definition memories: you want to know precisely when and why a constraint was established, not just that it exists.
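The relationship between a summary bullet, its grip, and the raw events might look something like this. The `Grip` and `Bullet` structs and the `expand` function are hypothetical shapes for illustration; the article only establishes that a grip links a bullet back to source events.

```rust
use std::collections::HashMap;

/// Hypothetical grip: the ids of the raw events a summary bullet was derived from.
struct Grip {
    event_ids: Vec<u64>,
}

/// A TOC summary bullet together with its provenance.
struct Bullet {
    text: String,
    grip: Grip,
}

/// Expand a bullet back to the raw event text it cites, in grip order.
/// Ids missing from the store are skipped.
fn expand<'a>(bullet: &Bullet, events: &'a HashMap<u64, String>) -> Vec<&'a str> {
    bullet
        .grip
        .event_ids
        .iter()
        .filter_map(|id| events.get(id).map(String::as_str))
        .collect()
}
```

An agent about to cite `bullet.text` as a constraint can call `expand` first and quote the original wording instead of the paraphrase.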

ADR-007: Tantivy for BM25

Embedded, pure Rust, Lucene-quality BM25. The index is disposable and always rebuildable from RocksDB. No Elasticsearch or OpenSearch cluster to operate. Tantivy runs inside the daemon process. The choice reflects a consistent theme across all ADRs: prefer embedded, rebuildable components over external services.
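What "disposable and always rebuildable" means in practice can be shown with a deliberately simple stand-in. The sketch below is a plain inverted index built with the standard library, not Tantivy and not BM25 scoring; the `rebuild_index` and `lookup` names are made up for the example.

```rust
use std::collections::{BTreeSet, HashMap};

/// term -> ids of the events containing it
type Index = HashMap<String, BTreeSet<usize>>;

/// Rebuild the whole index from the source-of-truth events.
/// Nothing in the index is needed to recreate it.
fn rebuild_index(events: &[&str]) -> Index {
    let mut index = Index::new();
    for (id, text) in events.iter().enumerate() {
        let terms = text
            .split(|c: char| !c.is_alphanumeric())
            .filter(|t| !t.is_empty());
        for term in terms {
            index.entry(term.to_lowercase()).or_default().insert(id);
        }
    }
    index
}

/// Case-insensitive term lookup, returning event ids in ascending order.
fn lookup(index: &Index, term: &str) -> Vec<usize> {
    index
        .get(&term.to_lowercase())
        .map(|ids| ids.iter().copied().collect())
        .unwrap_or_default()
}
```

Deleting the index loses nothing: running `rebuild_index` over the stored events yields an equal index, which is the property that lets the search layer be pruned, evicted, or rebuilt without touching the events.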

ADR-008: Per-Project Stores

Default isolation prevents project cross-contamination. Each project directory gets its own RocksDB instance, typically keyed by git repository root. Deleting a project’s memory is a directory removal, not a database query.

The core philosophical principle binding all these decisions together:

💡 Key Design: “Tools don’t decide, skills decide.” The memory substrate (daemon, gRPC API, data plane) provides capabilities deterministically. Agentic skills (the query plugins, the wizard skills, the retrieval brainstem) encode the decision logic. The substrate stays reliable and stable; behavior evolves through skill updates without touching the core data layer.

This separation means you can improve how the system decides to search without risking the integrity of the stored events. The data plane and the control plane are kept distinct by design. That is not just an architectural preference; it is what allows the system to evolve without breaking production deployments.

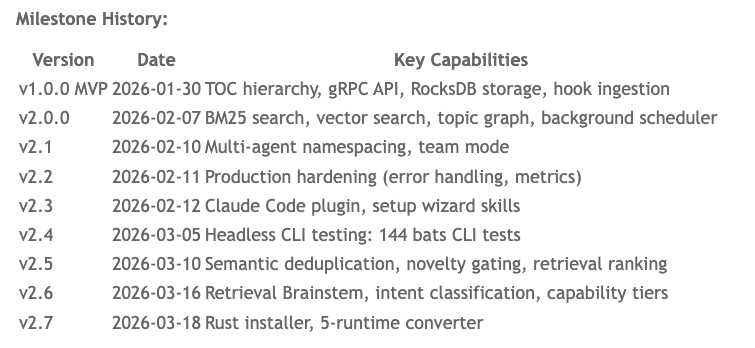

Current Status: v2.7 and Beyond

Agent-memory reached v2.7 on 2026-03-18. The project has moved well past MVP and is now working toward production hardening.

Milestone History:

v1.0.0 (MVP): 2026-01-30

- TOC hierarchy

- gRPC API

- RocksDB storage

- Hook ingestion

v2.0.0: 2026-02-07

- BM25 search

- Vector search

- Topic graph

- Background scheduler

v2.1: 2026-02-10

- Multi-agent namespacing

- Team mode

v2.2: 2026-02-11

- Production hardening (error handling, metrics)

v2.3: 2026-02-12

- Claude Code plugin

- Setup wizard skills

v2.4: 2026-03-05

- Headless CLI testing (144 bats tests)

v2.5: 2026-03-10

- Semantic deduplication

- Novelty gating

- Retrieval ranking

v2.6: 2026-03-16

- Retrieval Brainstem

- Intent classification

- Capability tiers

v2.7: 2026-03-18

- Rust installer

- 5-runtime converter

- Rust installer

- 5-runtime converter

Scale: The project spans more than 50,000 lines of Rust code across 14 crates, with 45+ end-to-end tests, 144 CLI tests (bats), 44 development phases, and 135 implementation plans.

The core data structures (append-only RocksDB, TOC hierarchy, gRPC API) are stable. The Ranking Policy (Phase 16), Cognitive Retrieval (Phase 17), Episodic Memory (Phase 44), and Multi-Runtime Installer (Phase 49) are all complete. The current focus is continued production hardening and expanding runtime support.

Conclusion

Agent-memory and agent-brain solve different problems, and you will likely want both.

Agent-brain gives your AI agents library memory: knowledge about your codebase, your documentation, your architecture decisions captured in documents. Use it when you need your agent to answer “what does our codebase say about X?”

agent-memory gives your AI coding assistants episodic memory: knowledge about the conversations themselves. Use it when you need your agent to answer “what did we decide about X last week?” or “what was the approach we settled on for Y in March?” This is what persistent AI agent context looks like in practice.

The amnesia problem is real and expensive. Every session that starts from scratch is a session where your agent is missing months of accumulated context: your team’s coding preferences, the constraints you established, the procedures you developed, the decisions you made and why. agent-memory captures all of that automatically, organizes it intelligently, and retrieves it without scanning everything.

The technical choices reflect a coherent philosophy. Rust for reliability. RocksDB for embedded persistence. Candle for offline embeddings. gRPC for typed interfaces. TOC for navigable hierarchy. Grips for provenance. Every choice points toward a system built for long-term operation with minimal operational overhead. No servers to manage beyond the local daemon. No API keys for embeddings. No data leaving your machine.

If you use an AI coding agent daily, your agent is accumulating a working history that is currently being discarded at the end of every session. agent-memory is the system that keeps that history and makes it retrievable.

Key Takeaways

- agent-memory captures episodic memory for AI agents (conversation history); agent-brain captures library memory (documents and code). Both are necessary; neither replaces the other.

- The six-layer cognitive stack gives the AI agent memory system graceful degradation: the TOC hierarchy always works, and faster layers accelerate it when available.

- Salience detection classifies memory importance at write time, making high-signal content (constraints, definitions, procedures) decay-exempt and prioritized at retrieval.

- The append-only design keeps raw events forever; retention policies only prune search indexes, so you can always rebuild.

- Three multi-agent memory sharing strategies (separate stores, unified namespacing, team mode) cover the full range from strict isolation to cross-user knowledge sharing.

Next Steps

- This is not ready for prime time. It is ready for people to test, help develop, and provide feedback. It is a work in progress.

- Install: cargo install memory-daemon and run memory-daemon install for your agent runtime.

- Configure: use the Claude Code memory plugin /memory-setup for a guided first-run wizard.

- Verify: run /memory-status to confirm the daemon is running and hooks are active.

- Explore: after a week of sessions, use /topic-graph to browse what themes your sessions have covered.

- Tune retention: run memory-daemon admin cleanup --dry-run to preview what would be pruned before committing to a retention policy.

agent-memory is part of a broader ecosystem of tools that give AI coding agents persistent context. Combined with agent-brain for document retrieval and RuleZ for behavioral constraints, it forms a complete cognitive infrastructure for AI-powered development workflows.

About the Author

Rick Hightower is a technology executive and data engineer who led ML/AI development at a Fortune 100 financial services company. He created skilz, the universal agent skill installer, supporting 30+ coding agents including Claude Code, Gemini, Copilot, and Cursor, and co-founded one of the world’s largest agentic skill marketplaces (and one of the first). Connect with Rick Hightower on LinkedIn or Medium.

Rick has been actively developing generative AI systems, agents, and agentic workflows for years. He is the author of numerous agentic frameworks and developer tools and brings deep practical expertise to teams looking to adopt AI.