Understanding the Distinction Between Context Engineering and Harness Engineering for Reliable AI Systems

Are you still struggling with AI agents that fail 20% of the time? It’s time to rethink your approach! Discover the critical difference between Context Engineering and Harness Engineering and learn how to build reliable systems that truly deliver. Don’t just create demos — build production-ready agents! Read more to transform your AI setup!

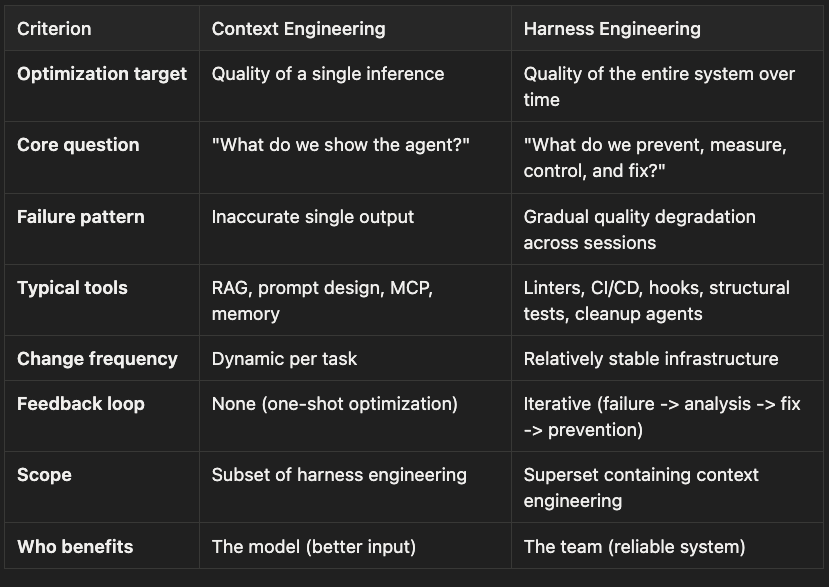

Context engineering focuses on optimizing what an AI model sees at inference time, while harness engineering encompasses the entire system design, ensuring reliability across multiple inferences. Context engineering is a subset of harness engineering. Harness engineering includes behavioral constraints, feedback loops, and quality gates. Effective harness design significantly improves performance, as evidenced by studies showing substantial performance gaps based on harness configurations. Both disciplines are essential, with context engineering providing necessary information and harness engineering ensuring consistent, reliable output over time.

You’ve spent weeks perfecting your RAG pipeline. Your prompts are chef’s-kiss. Your agent still fails 20% to 40% of the time in production. Sound familiar?

Here’s the uncomfortable truth most AI engineers learn the hard way: you’ve been optimizing the wrong layer. You’ve been doing context engineering without harness engineering, and wondering why your agents still can’t be trusted with real work.

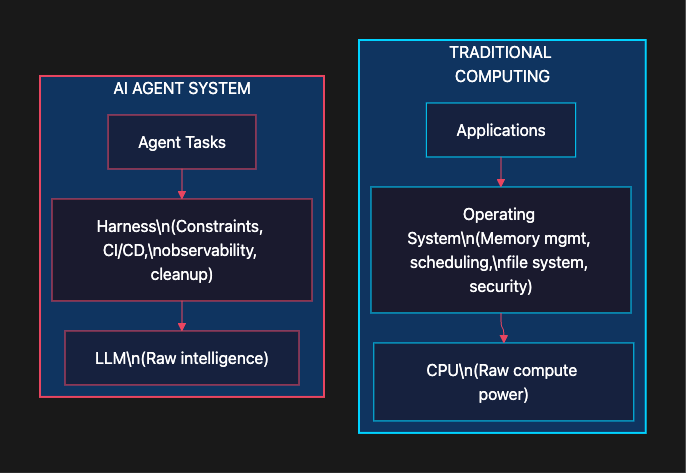

The model is the CPU. The harness is the OS. And right now, most teams are trying to run production workloads on bare metal with no operating system at all.

This article lays out both disciplines clearly, shows you exactly how they differ, and gives you the evidence and practical patterns to implement both. By the end, you’ll know whether you’re building a demo or a production system, and what it takes to close that gap.

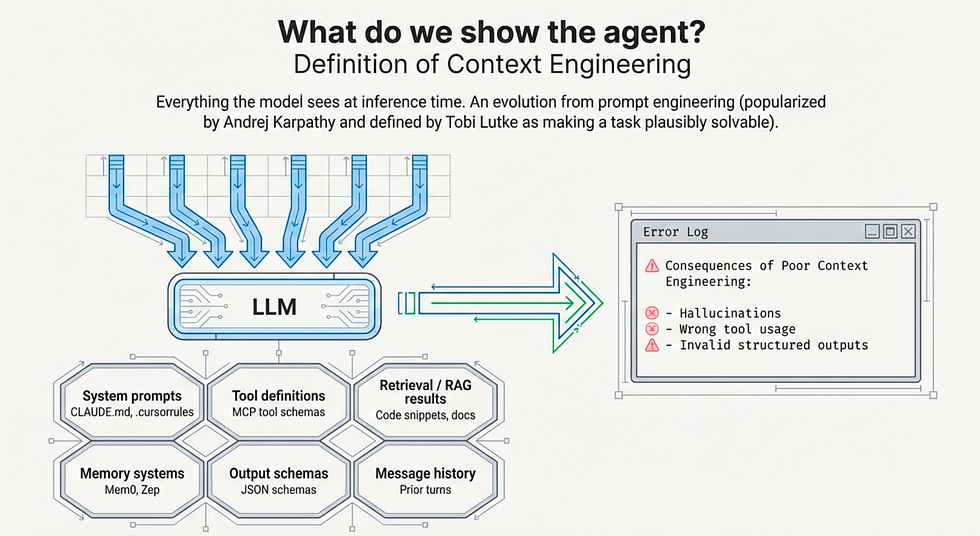

What is Context Engineering?

Context engineering is the design and management of everything the LLM sees at inference time. Every token that hits the model’s context window is a context engineering decision: the system prompt, the tool definitions, the RAG results, the message history, the output schemas, the memory from prior sessions.

Andrej Karpathy helped popularize the term as the successor to “prompt engineering.” It was an important reframe. “Prompt engineering” implied you were just crafting a clever instruction. Context engineering acknowledges that you’re actually designing an entire information environment. Shopify CEO Tobi Lutke offered a concise definition: “the art of providing all the context for the task to be plausibly solvable by the LLM.”

The question context engineering answers is straightforward: What do we show the agent?

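That question has more depth than it appears. Consider what goes into a single inference: the system prompt, the tool definitions, the retrieved documents, the message history, the output schema, and any memory carried over from prior sessions.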

Get any of these wrong and the model hallucinates, calls the wrong tool, or produces structurally invalid output. Context engineering is real engineering with real consequences.
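To make the "information environment" idea tangible, here is a minimal sketch of assembling one inference's context from its components. The `ContextBundle` class, its field names, and the section labels are all hypothetical and chosen for illustration; real agent frameworks structure this differently.

```python
from dataclasses import dataclass, field


@dataclass
class ContextBundle:
    """Everything the model sees for one inference (hypothetical structure)."""
    system_prompt: str
    tool_definitions: list[str] = field(default_factory=list)
    retrieved_chunks: list[str] = field(default_factory=list)
    message_history: list[str] = field(default_factory=list)
    output_schema: str = ""
    memory_notes: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Concatenate the non-empty components into labeled sections."""
        sections = [
            ("SYSTEM", [self.system_prompt]),
            ("TOOLS", self.tool_definitions),
            ("RETRIEVED", self.retrieved_chunks),
            ("MEMORY", self.memory_notes),
            ("HISTORY", self.message_history),
            ("OUTPUT SCHEMA", [self.output_schema]),
        ]
        parts = []
        for label, items in sections:
            items = [item for item in items if item]  # skip empty components
            if items:
                parts.append(f"## {label}\n" + "\n".join(items))
        return "\n\n".join(parts)


bundle = ContextBundle(
    system_prompt="You are a code review assistant.",
    tool_definitions=["run_tests(path: str) -> str"],
    retrieved_chunks=["src/api/users.py: def get_user(user_id: int) ..."],
    message_history=["user: Why does get_user return 500?"],
)
rendered = bundle.render()
```

Every field is a separate decision: leave one out, or fill it with the wrong material, and the model works from an incomplete picture.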

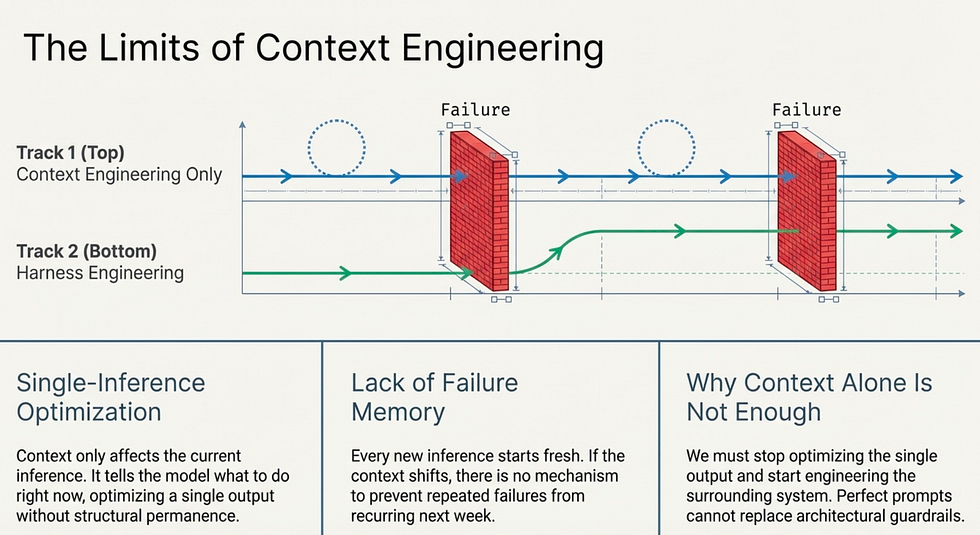

The Limits of Context Engineering

But here’s the thing: even perfect context engineering only optimizes a single inference. It tells the model what to do right now. It says nothing about what happens when the model gets it wrong, or how to prevent the same failure from recurring next week.

Context engineering also has no memory of its own failures. Every new inference starts fresh. If the model runs the wrong command on Monday, and you fix the prompt on Tuesday, there’s nothing stopping it from running the wrong command again on Thursday once the context shifts.

That’s where harness engineering comes in.

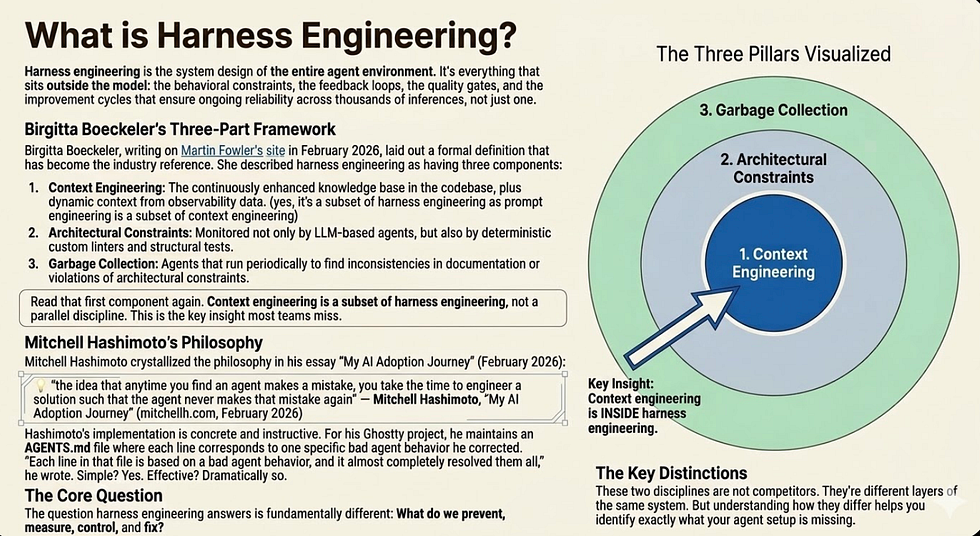

What is Harness Engineering?

Harness engineering is the system design of the entire agent environment. It’s everything that sits outside the model: the behavioral constraints, the feedback loops, the quality gates, and the improvement cycles that ensure ongoing reliability across thousands of inferences, not just one.

Birgitta Boeckeler, writing on Martin Fowler’s site in February 2026, laid out a formal definition that has become the industry reference. She described harness engineering as having three components:

- Context Engineering (yes, it’s a subset of harness engineering, just as prompt engineering is a subset of context engineering): The continuously enhanced knowledge base in the codebase, plus dynamic context from observability data

- Architectural Constraints: Monitored not only by LLM-based agents, but also by deterministic custom linters and structural tests

- Garbage Collection: Agents that run periodically to find inconsistencies in documentation or violations of architectural constraints

Read that first component again. Context engineering is a subset of harness engineering, not a parallel discipline. This is the key insight most teams miss.

Mitchell Hashimoto crystallized the philosophy in his essay “My AI Adoption Journey” (February 2026):

💡 “the idea that anytime you find an agent makes a mistake, you take the time to engineer a solution such that the agent never makes that mistake again” — Mitchell Hashimoto, “My AI Adoption Journey” (mitchellh.com, February 2026)

Hashimoto’s implementation is concrete and instructive. For his Ghostty project, he maintains an AGENTS.md file where each line corresponds to one specific bad agent behavior he corrected. “Each line in that file is based on a bad agent behavior, and it almost completely resolved them all,” he wrote. Simple? Yes. Effective? Dramatically so.

The question harness engineering answers is fundamentally different: What do we prevent, measure, control, and fix?

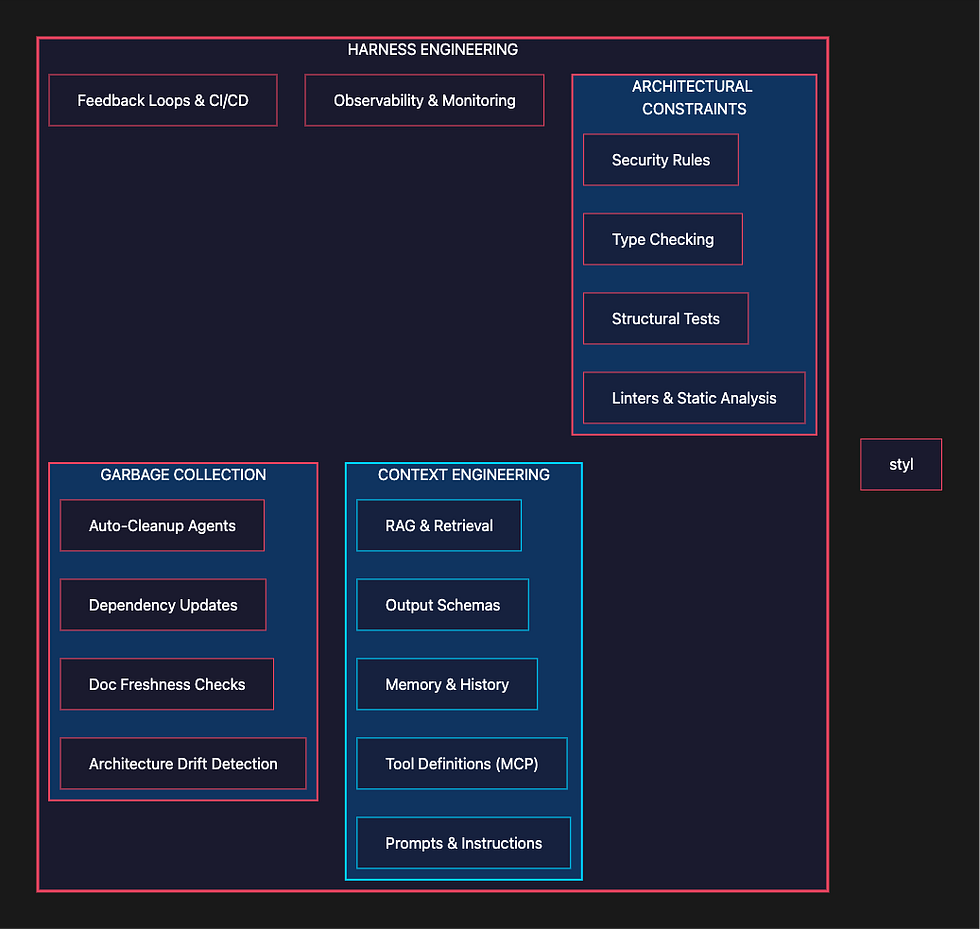

The Three Pillars of Harness Engineering

The nesting relationship between these disciplines is worth seeing explicitly:

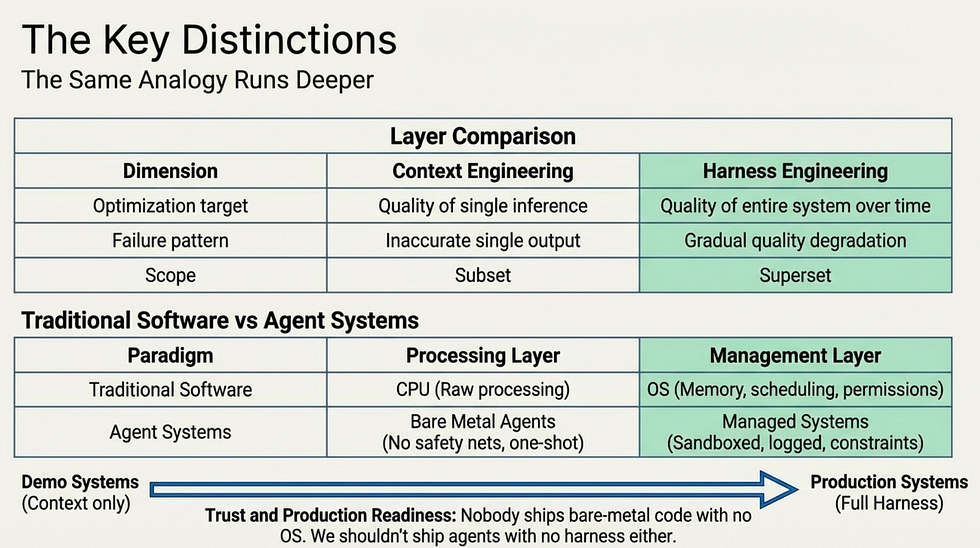

The Key Distinctions

These two disciplines are not competitors. They’re different layers of the same system. But understanding how they differ helps you identify exactly what your agent setup is missing.

The last row matters more than it looks. Context engineering helps the model produce better individual outputs. Harness engineering helps the team trust the model enough to let it do real work unsupervised.

That’s the difference between a demo and a production system.

The Same Analogy Runs Deeper

To make this concrete, consider how traditional software systems work versus AI agent systems:

Nobody ships bare-metal code with no OS. We shouldn’t ship agents with no harness either. The Harness is the Agent’s OS.

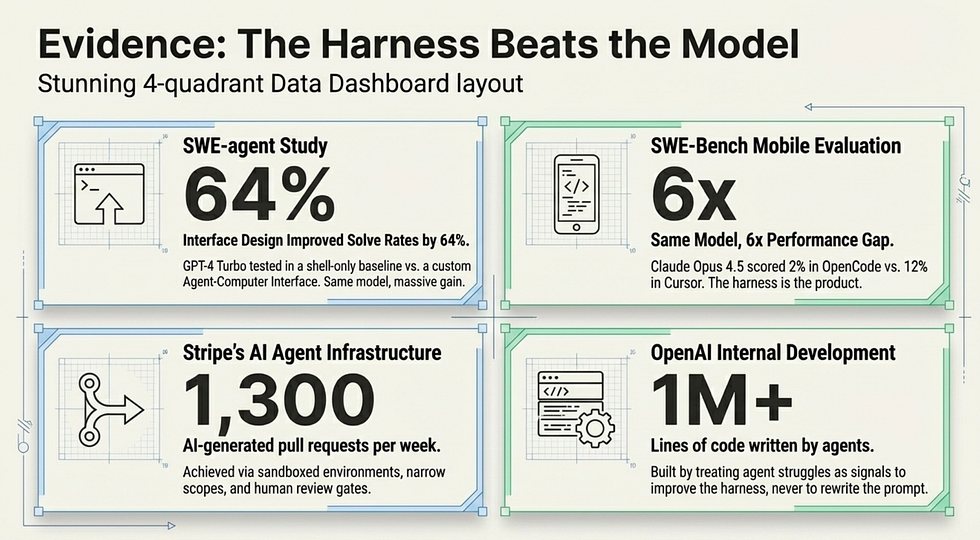

Evidence: The Harness Beats the Model

If you’re skeptical that harness design matters as much as model capability, the evidence points in one direction. These aren’t theoretical arguments. The first two examples are same-model comparisons with hard numbers; the last two are production case studies.

SWE-agent: Interface Design Alone Improved Solve Rates by 64%

The SWE-agent research from Princeton (accepted at NeurIPS 2024) ran a careful ablation study. Researchers took the same model (GPT-4 Turbo) and tested it in two harness configurations:

- Baseline: Shell-only interface, no custom editing tools

- SWE-agent: Custom Agent-Computer Interface with a purpose-built code editing tool

The result: the custom ACI improved solve rates by 64% relative to the shell-only baseline. Same model. Same task set. The only variable was the harness design.

(If you’re still blaming the model for your agent’s failures, you’re blaming the CPU for a kernel panic.)

SWE-Bench Mobile: Same Model, 6x Performance Gap

The 2026 SWE-Bench Mobile evaluation made the point even more starkly. The same model, Claude Opus 4.5, scored:

- 2% success rate in one agent harness (OpenCode)

- 12% success rate in a different agent harness (Cursor)

That’s a 6x performance gap on the same benchmark, with the same underlying model. The entire difference came from agent design: how the harness managed tool use, how it structured editing interfaces, and how it handled failure recovery.
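The arithmetic behind that figure is worth spelling out, because a ratio and a percentage-point gap describe the same data differently. A small sketch using only the two success rates quoted above (the function names are illustrative):

```python
def relative_gap(low_pct: float, high_pct: float) -> float:
    """How many times larger the higher success rate is."""
    return high_pct / low_pct


def absolute_gap(low_pct: float, high_pct: float) -> float:
    """Difference between the two rates in percentage points."""
    return high_pct - low_pct


ratio = relative_gap(2, 12)    # 6.0, the "6x" in the heading
points = absolute_gap(2, 12)   # 10 percentage points
```

Both numbers come from the same pair of results: a 6x relative gap and a 10-point absolute gap.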

You can’t close a 6x gap by tweaking prompts. The harness is the product.

Stripe: 1,300 AI-Written Pull Requests Per Week

Stripe’s AI agent infrastructure demonstrates harness engineering at scale. Their system generates 1,300 AI-written pull requests per week using:

- Narrow, well-defined task scopes (not “write me a feature” but “migrate this specific function to the new API”)

- Sandboxed runtime environments

- Parallel agent execution with independent contexts

- Human review gates before merge

- Precise specifications as input, not vague requirements

Every one of those is a harness engineering decision. The model doesn’t know it’s sandboxed. The model doesn’t know there’s a human review gate. The harness enforces these constraints deterministically, regardless of what the model decides to do.

OpenAI Internal: 1M+ Lines of Code, Zero Manually Typed

Boeckeler’s article on Martin Fowler’s site describes an OpenAI team that built a product exceeding one million lines of production code without a single manually typed line. Their defining practice: they treated every instance where the agent struggled as a signal to improve the harness, not an invitation to try harder prompts.

That is harness engineering in a single sentence: the harness learns from the agent’s failures, so the agent doesn’t have to repeat them.

Practical Implementation Guide

Enough theory. Here’s how you implement both disciplines in your agent setup.

Stage 1: Context Engineering Basics

These are the components that optimize what the model sees. Start here if you’re building from scratch.

Instruction Files (CLAUDE.md, .cursorrules, AGENTS.md)

This is the simplest form of context engineering. You’re telling the model what to do by giving it project-specific rules in its context window:

```markdown
# CLAUDE.md -- Context engineering in action

## Project Context

This is a FastAPI application using SQLAlchemy 2.0 with async sessions.
Always use `async with get_session() as session` for database access.

## Code Style

- Use Pydantic v2 model_validator, not v1 @validator
- Return 422 for validation errors, not 400
- All endpoints need OpenAPI docstrings
```

What it does: loads project conventions directly into the model’s context at inference time. Why this works: the model now has the project-specific rules it needs to make correct decisions, rather than guessing from general training. The limitation: this only affects inferences where these instructions are loaded. It doesn’t prevent violations or correct drift.

RAG and Retrieval Pipelines

Semantic search over your codebase, API docs, or domain knowledge gives the model relevant context for the specific task at hand. RAG solves the problem of the model knowing about your domain in general but not your specific implementation details. Instead of stuffing everything into the system prompt, you retrieve the most relevant chunks at query time.
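As a rough illustration of "retrieve the most relevant chunks at query time", the sketch below ranks chunks by bag-of-words cosine similarity. This is a deliberate simplification: a production pipeline would typically use learned embeddings and a vector index instead of word counts. The function names and sample chunks are made up for the example.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercase word counts; a stand-in for a real embedding."""
    return Counter(re.findall(r"[a-z0-9_]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = tokenize(query)
    ranked = sorted(chunks, key=lambda c: cosine(q, tokenize(c)), reverse=True)
    return ranked[:k]


chunks = [
    "get_session returns an async SQLAlchemy session",
    "Validation errors return HTTP 422",
    "Deployment uses a blue-green rollout",
]
top = retrieve("open an async database session", chunks, k=1)
```

Only the chunk about sessions reaches the model's context; the deployment and validation notes stay out of the window until a query needs them.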

Model Context Protocol (MCP)

Anthropic’s MCP has become the standard for tool and context interoperability in 2026. It lets agents discover and use tools dynamically, with structured schemas that guide tool selection. Think of it as JDBC for AI tools: a standard adapter layer that lets any agent work with any compliant tool server.
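For a sense of what a "structured schema" looks like, here is a sketch of a single tool definition in the shape MCP servers advertise: a name, a description, and a JSON Schema under `inputSchema`. The `search_docs` tool and the `missing_required` helper are hypothetical, and the helper checks only required fields rather than performing full JSON Schema validation.

```python
# One tool entry as a server might list it. The tool itself is hypothetical.
search_docs_tool = {
    "name": "search_docs",
    "description": "Search the project documentation and return matching passages.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "query": {"type": "string", "description": "Search terms"},
            "limit": {"type": "integer", "minimum": 1, "default": 5},
        },
        "required": ["query"],
    },
}


def missing_required(tool: dict, arguments: dict) -> list[str]:
    """Return required argument names absent from a proposed tool call."""
    required = tool["inputSchema"].get("required", [])
    return [name for name in required if name not in arguments]


problems = missing_required(search_docs_tool, {"limit": 3})  # ["query"]
```

The description and schema are context engineering (they shape which tool the model picks and how it fills in arguments), while a check like `missing_required` is the kind of deterministic validation a harness can run before the call goes out.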

Memory Systems (Mem0, Zep, Agent Brain and Agent Memory)

Persistent context across sessions means the model remembers prior decisions, user preferences, and project-specific patterns. Without memory, every session starts cold. With memory, the agent accumulates project-specific knowledge over time.
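The cold-start versus warm-start difference can be sketched with a file-backed note store. This is a toy under stated assumptions: `FileMemory` is a hypothetical class, not the API of Mem0, Zep, or any other memory product, and real systems add retrieval ranking, summarization, and expiry.

```python
import json
import tempfile
from pathlib import Path


class FileMemory:
    """Hypothetical session memory persisted as a JSON file."""

    def __init__(self, path: Path):
        self.path = path
        # Reload notes written by earlier sessions, if any.
        self.notes: list[str] = json.loads(path.read_text()) if path.exists() else []

    def remember(self, note: str) -> None:
        """Store a note once and persist it immediately."""
        if note not in self.notes:
            self.notes.append(note)
            self.path.write_text(json.dumps(self.notes, indent=2))

    def recall(self, keyword: str) -> list[str]:
        """Return notes containing the keyword (case-insensitive)."""
        return [n for n in self.notes if keyword.lower() in n.lower()]


store_path = Path(tempfile.mkdtemp()) / "memory.json"
session_one = FileMemory(store_path)
session_one.remember("User prefers uv over pip")

session_two = FileMemory(store_path)  # a later session reloads from disk
recalled = session_two.recall("uv")
```

The second session never saw the original conversation, yet it starts with the preference already available to inject into context.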

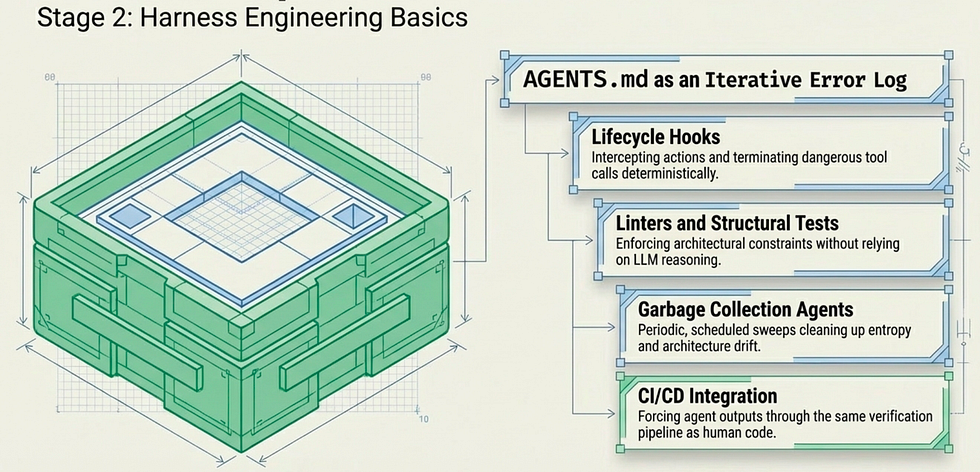

Stage 2: Harness Engineering Basics

Once you have context engineering in place, layer on the harness. These components ensure the system works reliably over time, not just on the first try.

AGENTS.md as Iterative Error Log

Following Hashimoto’s pattern, treat your AGENTS.md as a living document where each line prevents a specific past failure:

```markdown
# AGENTS.md -- Harness engineering in action

- Never run `rm -rf` on any directory without explicit user confirmation
- Always run tests before committing; do not commit if tests fail
- Use `uv` for Python package management, not pip directly
- Database migrations must be reviewed; never auto-apply in production
- When modifying API endpoints, always update the OpenAPI spec
```

What it does: each line is a constraint added after a real failure. The first time the agent deletes the wrong directory, you add that rule so the same mistake is far less likely to recur.

Why this is harness engineering and not just context engineering: the AGENTS.md is itself context engineering (it’s loaded into the model’s context), but the practice of treating failures as signals to update it is harness engineering. You’re building a feedback loop, not just writing better prompts.
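The "failure becomes a rule" habit is easy to turn into a small helper. The sketch below is one possible shape, not Hashimoto's tooling: `add_rule` is a hypothetical function that appends a bullet to AGENTS.md and skips duplicates.

```python
import tempfile
from pathlib import Path


def add_rule(agents_md: Path, rule: str) -> bool:
    """Append a rule as a bullet unless it is already present.

    Returns True if the file was changed.
    """
    text = agents_md.read_text() if agents_md.exists() else ""
    bullet = f"- {rule.strip()}"
    if bullet in (line.strip() for line in text.splitlines()):
        return False
    # Make sure the new bullet starts on its own line.
    separator = "" if text == "" or text.endswith("\n") else "\n"
    agents_md.write_text(text + separator + bullet + "\n")
    return True


agents_path = Path(tempfile.mkdtemp()) / "AGENTS.md"
rule = "Never run `rm -rf` on any directory without explicit user confirmation"
first = add_rule(agents_path, rule)    # True: rule recorded after the incident
second = add_rule(agents_path, rule)   # False: already covered
```

Calling a helper like this from an incident review keeps the file growing one failure at a time without accumulating repeated rules.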

Lifecycle Hooks

Claude Code provides hooks that intercept tool calls before and after execution:

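```json
{
  "hooks": {
    "PreToolUse": [
      {
        "matcher": "Bash",
        "hooks": [
          {
            "type": "command",
            "command": "echo 'BLOCKED: shell commands are disabled' >&2; exit 2"
          }
        ]
      }
    ]
  }
}
```

What it does: the hook runs before every Bash tool call. As written it blocks all of them: in Claude Code’s hook protocol, exit code 2 cancels the call and passes stderr back to the model, while other non-zero codes are reported as errors without blocking. To block only dangerous commands, the `command` would instead be a script that inspects the tool input and exits with 2 only when the command matches a blocked pattern.

Why this is better than a prompt rule: deterministic enforcement. The model doesn’t need to “remember” not to run dangerous commands. The harness prevents it regardless of what the model decides. This is architectural constraints in practice.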
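A pattern-matching hook could look like the sketch below. It assumes, per my reading of Claude Code's hook documentation, that the event arrives as JSON with `tool_name` and `tool_input.command` fields and that exit code 2 blocks the call; check both against the current docs before relying on them. The deny-list and function names are hypothetical.

```python
import json
import re
import sys

# Hypothetical deny-list; extend it after each real incident.
BLOCKED_PATTERNS = [
    re.compile(r"\brm\s+-(rf|fr)\b", re.IGNORECASE),
    re.compile(r"\bgit\s+push\b.*--force", re.IGNORECASE),
    re.compile(r"\bdrop\s+table\b", re.IGNORECASE),
]


def is_blocked(command: str) -> bool:
    """True if the shell command matches any deny-list pattern."""
    return any(pattern.search(command) for pattern in BLOCKED_PATTERNS)


def decide(raw_event: str) -> int:
    """Return the exit code for a PreToolUse event given as JSON text."""
    event = json.loads(raw_event)
    command = event.get("tool_input", {}).get("command", "")
    if event.get("tool_name") == "Bash" and is_blocked(command):
        print(f"BLOCKED: {command}", file=sys.stderr)
        return 2  # assumed to cancel the tool call
    return 0      # allow everything else


# When saved as a hook script, finish with:
#   sys.exit(decide(sys.stdin.read()))
```

Keeping the decision in a pure function (`decide`) means the deny-list can be unit tested like any other code, which is itself a small piece of harness engineering.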

Linters and Structural Tests

Run these after every agent action, not just at CI time:

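```bash
#!/usr/bin/env bash
# Structural test: verify every API module has a corresponding test file.
status=0

# Read find's output via process substitution so the loop runs in this shell
# and the failure flag survives after the loop ends.
while IFS= read -r f; do
  test_file="tests/$(basename "$f")"
  if [ ! -f "$test_file" ]; then
    echo "FAIL: No test file for $f"
    status=1   # keep going so every missing test file is reported
  fi
done < <(find src/api -name "*.py")

exit "$status"
```

What it does: enforces an architectural constraint (every API file has a test file) in a deterministic, non-LLM way. The agent can’t reason its way around this check. Either the test file exists or the build fails.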

Garbage Collection Agents

Periodic agents clean up entropy before it compounds:

- Check for stale documentation that references outdated APIs

- Detect architecture drift (new patterns not matching established conventions)

- Remove unused imports, dead code, and orphaned test fixtures

- Verify dependency versions match lock files

Garbage collection is the most overlooked component of harness engineering. Every long-lived codebase accumulates entropy: docs that no longer match the code, configs that reference deleted services, tests that pass for the wrong reasons. A garbage collection agent runs on a schedule, finds these inconsistencies, and either fixes them or flags them for review.
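One of the cheaper garbage collection checks needs no LLM at all: scan the docs for file paths that no longer exist. The sketch below is a minimal version under stated assumptions; `find_stale_references` is a hypothetical function, it only recognizes backtick-quoted `.py` paths, and the demo builds a throwaway repository in a temp directory.

```python
import re
import tempfile
from pathlib import Path


def find_stale_references(docs_dir: Path, repo_root: Path) -> list[tuple[str, str]]:
    """Return (doc file, referenced path) pairs where the path no longer exists."""
    stale = []
    for doc in sorted(docs_dir.rglob("*.md")):
        # Look for backtick-quoted Python paths such as `src/api/users.py`.
        for ref in re.findall(r"`([\w./-]+\.py)`", doc.read_text()):
            if not (repo_root / ref).exists():
                stale.append((doc.name, ref))
    return stale


# Throwaway repository: one real module, one doc that mentions a deleted one.
repo = Path(tempfile.mkdtemp())
(repo / "src" / "api").mkdir(parents=True)
(repo / "src" / "api" / "users.py").write_text("# users endpoint\n")
(repo / "docs").mkdir()
(repo / "docs" / "guide.md").write_text(
    "See `src/api/users.py` and `src/api/orders.py` for the endpoints.\n"
)

stale = find_stale_references(repo / "docs", repo)
```

A scheduled job could run a check like this and hand the stale entries to an agent to fix, or open a ticket for human review.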

CI/CD Integration

Agent outputs go through the same pipeline as human code: linting, testing, security scanning, review. The harness doesn’t trust the agent. It verifies the agent, every time.
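"Verify every time" can be reduced to a loop over gate commands. The sketch below is illustrative: `run_gates` is a hypothetical helper, and the demo gates are stand-in Python one-liners so the example runs anywhere. A real pipeline would invoke the project's actual linter, test runner, and security scanner.

```python
import subprocess
import sys


def run_gates(gates: list[tuple[str, list[str]]]) -> list[str]:
    """Run each gate command and return the names of the gates that failed."""
    failed = []
    for name, command in gates:
        result = subprocess.run(command, capture_output=True, text=True)
        if result.returncode != 0:
            failed.append(name)
    return failed


# Stand-in commands: the first succeeds, the second exits non-zero.
demo_gates = [
    ("lint", [sys.executable, "-c", "print('lint ok')"]),
    ("tests", [sys.executable, "-c", "import sys; sys.exit(1)"]),
]
failed_gates = run_gates(demo_gates)
merge_allowed = not failed_gates
```

The agent's opinion of its own work never enters the decision; only exit codes do.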

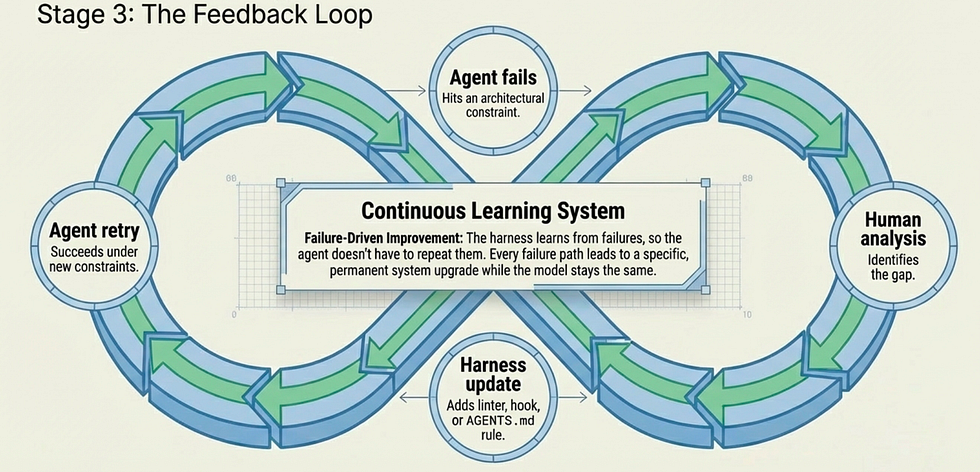

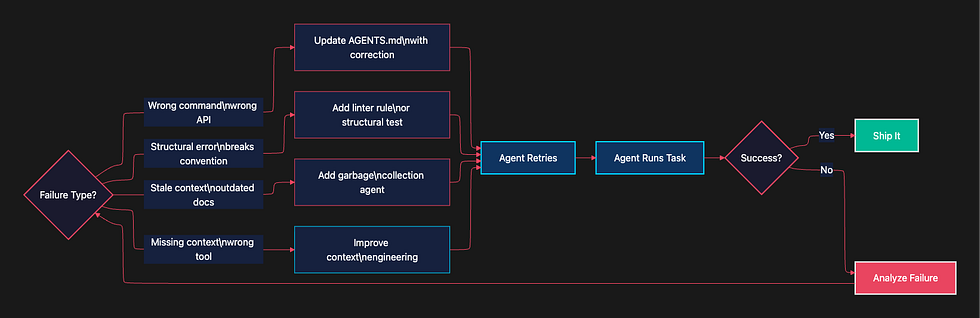

Stage 3: The Feedback Loop

The feedback loop is what turns a set of harness components into a self-improving system. Here’s how it works:

Notice that every failure path leads to a different type of harness update. Wrong command? Update the instruction file. Structural error? Add a linter. Stale docs? Add a garbage collection agent. Missing context? Improve context engineering. Each failure teaches the harness something new. The harness doesn’t forget.
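The routing described above fits in a lookup table. This sketch simply encodes the four failure paths from the paragraph; the `FailureKind` enum and `triage` function are hypothetical names, and a real system would attach details such as the offending command or file.

```python
from enum import Enum


class FailureKind(Enum):
    WRONG_COMMAND = "wrong_command"
    STRUCTURAL_ERROR = "structural_error"
    STALE_DOCS = "stale_docs"
    MISSING_CONTEXT = "missing_context"


# Each failure type maps to the harness update named in the text.
HARNESS_UPDATE = {
    FailureKind.WRONG_COMMAND: "Update the instruction file",
    FailureKind.STRUCTURAL_ERROR: "Add a linter",
    FailureKind.STALE_DOCS: "Add a garbage collection agent",
    FailureKind.MISSING_CONTEXT: "Improve context engineering",
}


def triage(failures: list[FailureKind]) -> list[str]:
    """Turn observed failures into a deduplicated, ordered list of work items."""
    actions = []
    for failure in failures:
        action = HARNESS_UPDATE[failure]
        if action not in actions:
            actions.append(action)
    return actions


work_items = triage([
    FailureKind.WRONG_COMMAND,
    FailureKind.STALE_DOCS,
    FailureKind.WRONG_COMMAND,
])
```

The point of the table is that no failure type maps to "retry and hope": each one ends in a change to the system.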

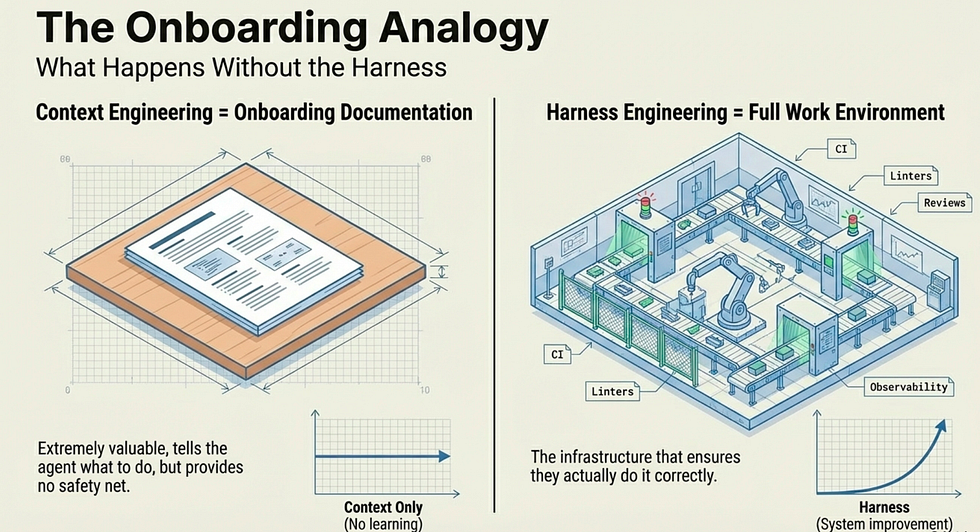

The Onboarding Analogy

Think of it this way.

Context engineering is giving a new hire a perfect onboarding document. It explains the codebase, the conventions, the tools, and the team’s preferences. A good onboarding document is enormously valuable. Nobody disputes that.

Harness engineering is the full work environment: the linter that catches style violations before code review, the CI pipeline that runs tests on every push, the architecture decision records that explain why things are the way they are, the observability stack that alerts when something goes wrong, and the code review process that catches what automated tools miss.

You wouldn’t hand a new developer your entire codebase with no linter, no CI, and no code review, and expect production-quality code. Why do we do exactly this with AI agents?

The onboarding document (context engineering) tells them what to do. The work environment (harness engineering) ensures they actually do it correctly, and keeps them on track as the project evolves and entropy accumulates.

What Happens Without the Harness

Here’s what the difference looks like operationally:

Without a harness, the only feedback is “it worked” or “it didn’t.” With a harness, every failure teaches the system something. That’s the fundamental difference.

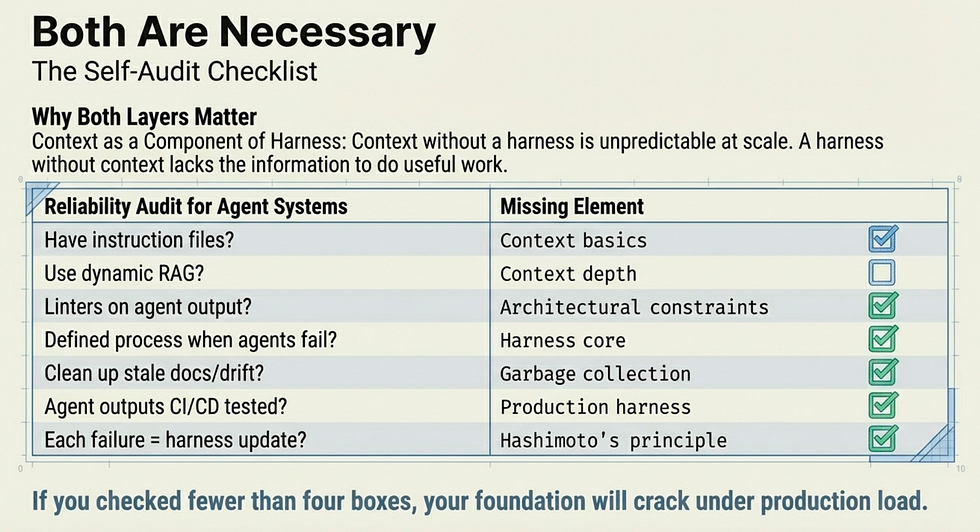

Both Are Necessary

Let me be direct: you need both. Context engineering without harness engineering gives you an agent that’s brilliant on its first try and unpredictable on its hundredth. Harness engineering without context engineering gives you a beautifully constrained agent that doesn’t have enough information to do useful work.

The relationship is straightforward: context engineering is a component of harness engineering, not a competing discipline. If you’re only doing context engineering, you’re running on bare metal. If you’re doing harness engineering, you’re necessarily doing context engineering plus everything else.

The Self-Audit Checklist

Here’s a quick audit for your current agent setup:

If you checked fewer than four boxes, you’re building agents on a foundation that cracks under production load. The model isn’t the problem. The harness is.

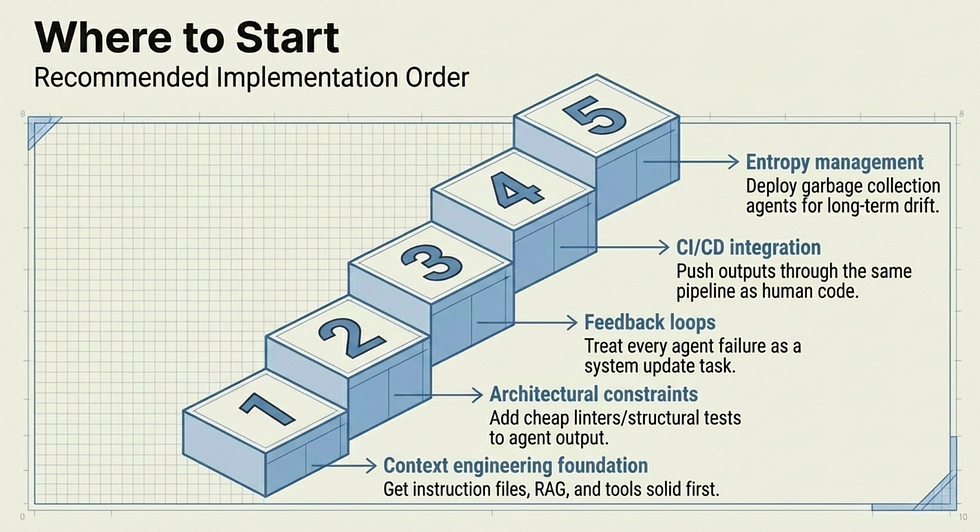

Where to Start

If you’re building from scratch, invest in this order:

- Context engineering first. Get your instruction files, RAG pipeline, and tool definitions solid. You need the foundation before you build the structure.

- Add architectural constraints. Start with linters and structural tests that run on every agent output. These are cheap to add and catch a lot of failures.

- Build the feedback loop. Treat every agent failure as a harness engineering task. Add a rule, add a test, or add a garbage collection check. Don’t just fix the output; fix the system.

- Add CI/CD integration. Agent outputs should go through the same pipeline as human code. No exceptions for production workloads.

- Entropy management last. Once you have failures being captured and prevented, add periodic garbage collection agents to handle the drift that accumulates even when individual inferences are correct.

Q&A Review

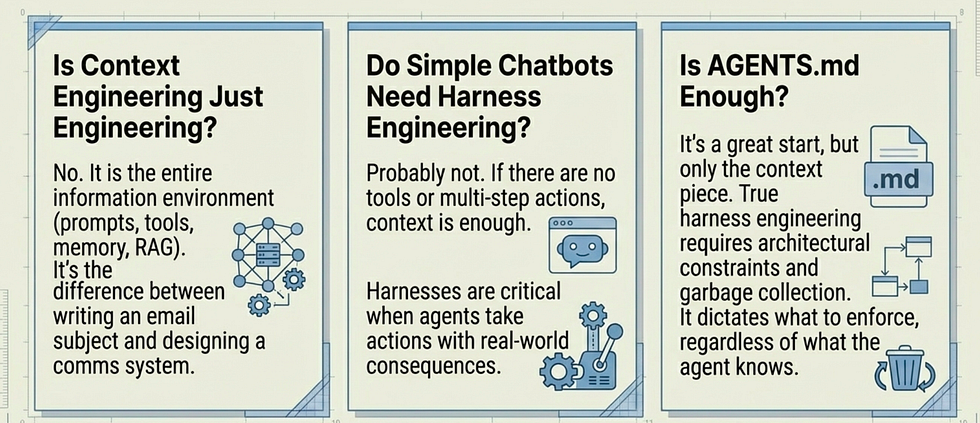

Q: Is context engineering just prompt engineering with a fancier name?

A: No. Prompt engineering is one component of context engineering. Context engineering encompasses everything the model sees at inference time: prompts, tool definitions, RAG results, memory, output schemas, and message history. It’s the difference between writing a good email subject line and designing an entire communication system.

Q: Do I need harness engineering for simple chatbots?

A: Probably not. If your agent does one thing in one context with no tools, context engineering is likely sufficient. Harness engineering becomes critical when agents take actions, use tools, operate across sessions, or need to maintain reliability at scale. The more real-world consequences your agent has, the more the harness matters.

Q: Is AGENTS.md enough for harness engineering?

A: It’s a great start, but it covers only the context engineering portion of harness engineering. True harness engineering also requires architectural constraints (linters, structural tests), garbage collection (entropy management), feedback loops (CI/CD), and observability. AGENTS.md addresses “what to tell the agent.” The full harness addresses “what to enforce regardless of what the agent knows.”

Q: Which should I invest in first?

A: Context engineering. You need the foundation before you build the structure. Get your instruction files, RAG pipeline, and tool definitions solid. Then layer on the harness: add linters that check agent output, build a feedback loop for failures, and start treating every agent mistake as a harness engineering opportunity. The order matters.

Q: Can a great harness compensate for a weaker model?

A: The evidence says yes, dramatically so. SWE-Bench Mobile showed a 6x performance gap between different harnesses using the same model. Stripe runs 1,300 PRs per week with narrow task scopes and sandboxed execution. The harness design is doing more work than the model capability. For production reliability, the harness matters more than the model tier. That said, the best systems pair a capable model with a well-designed harness. One doesn’t replace the other.

Q: Who coined “harness engineering” and where does the term come from?

A: It’s a distributed coinage. Mitchell Hashimoto used it in his February 2026 essay to describe his AGENTS.md-based iterative error prevention approach. Birgitta Boeckeler on Martin Fowler’s site published a formal three-component definition the same month. LangChain defined “Agent = Model + Harness” in their “Anatomy of an Agent Harness” post. All three are worth reading. The concept converged from multiple practitioners independently arriving at the same conclusion: context alone isn’t enough.

References

- Mitchell Hashimoto, “My AI Adoption Journey,” mitchellh.com, February 2026. https://mitchellh.com/writing/my-ai-adoption-journey

- Birgitta Boeckeler, “Harness Engineering,” martinfowler.com, February 17, 2026. https://martinfowler.com/articles/exploring-gen-ai/harness-engineering.html

- Vivek Trivedy (LangChain), “The Anatomy of an Agent Harness.” https://blog.langchain.com/the-anatomy-of-an-agent-harness/

- John Yang et al. (Princeton), “SWE-agent: Agent-Computer Interfaces Enable Automated Software Engineering,” NeurIPS 2024. https://arxiv.org/abs/2405.15793

- SWE-Bench Mobile evaluation, 2026. https://arxiv.org/html/2602.09540v1

- MindStudio, “Stripe’s AI Agent Infrastructure,” 2026. https://www.mindstudio.ai/blog/what-is-ai-agent-harness-stripe-minions-2

- Phil Schmid, “Context Engineering,” philschmid.de, June 30, 2025. https://www.philschmid.de/context-engineering

- LangChain, “The Rise of Context Engineering.” https://blog.langchain.com/the-rise-of-context-engineering/

About the Author

Rick Hightower is a technology executive and data engineer who led ML/AI development at a Fortune 100 financial services company. He created skilz, the universal agent skill installer, supporting 30+ coding agents including Claude Code, Gemini, Copilot, and Cursor, and co-founded the world’s largest agentic skill marketplace. Connect with Rick Hightower on LinkedIn or Medium.

Rick has been actively developing generative AI systems, agents, and agentic workflows for years. He is the author of numerous agentic frameworks and developer tools and brings deep practical expertise to teams looking to adopt AI.
